Computational Graphs in Deep Learning With Python

In this Deep Learning With Python tutorial, we will tell you about computational graphs in Deep Learning. We will show you how to implement these computational graphs with Python. Moreover, we will look at dynamic computational graphs and at forward and backward propagation.

So, let’s begin Computation Graphs in Deep Learning With Python.

Deep Learning Computational Graphs

In fields like Cheminformatics and Natural Language Understanding, it is often useful to compute over data-flow graphs. Computational Graphs form an integral part of Deep Learning.

Not only do they help us simplify working with large datasets, but they are also simple to understand. So in this tutorial, we will introduce them to you and then show you how to implement them using Python. For this, we will use the Dask library from PyPI.

Benefits of learning computational graphs:

- Auto calculations: A framework can use the graph to handle hard math, such as derivatives, for you.

- Speed: Independent parts of the calculation can run at the same time across CPU cores.

- Efficiency: Small steps can be combined into one bigger step so that the program runs faster.

- Easy fix: The graph points you to the exact place where the data went wrong.

What are Computational Graphs in Deep Learning?

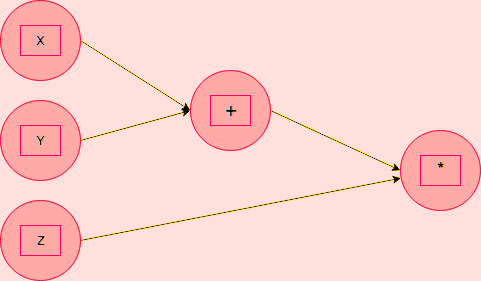

A computational graph is a way to represent a mathematical function in the language of graph theory. Nodes are input values or functions for combining them; as data flows through this graph, the edges receive their weights.

So we have two kinds of nodes: input nodes and function nodes. Outbound edges from input nodes bear the input value, and those from function nodes bear the composite of the weights of the inbound edges. The graph above represents the following expression:

f(x,y,z)=(x+y)*z

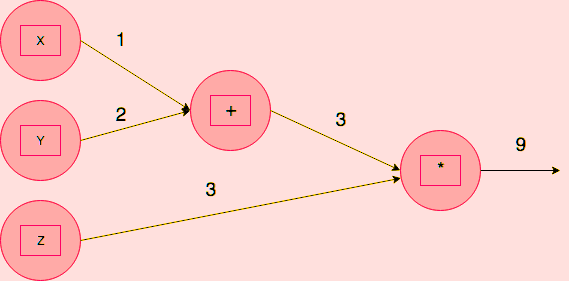

Of the five nodes, the leftmost three are input nodes; the two on the right are function nodes. Now, if we compute f(1,2,3), the addition node outputs 1+2=3 and the multiplication node outputs 3*3=9.
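To make this concrete, here is a minimal Python sketch of the graph for f(x,y,z)=(x+y)*z. The dictionary layout and the names `graph`, `evaluate`, `sum`, and `product` are assumptions made for illustration; they do not come from any library.

```python
import operator

# Hypothetical minimal graph: each function node maps to (operation, input node names).
graph = {
    "sum": (operator.add, ("x", "y")),         # function node: x + y
    "product": (operator.mul, ("sum", "z")),   # function node: (x + y) * z
}


def evaluate(graph, inputs, order=("sum", "product")):
    """Evaluate the function nodes in the given order, starting from the input values."""
    values = dict(inputs)
    for name in order:
        func, arg_names = graph[name]
        values[name] = func(*(values[arg] for arg in arg_names))
    return values


values = evaluate(graph, {"x": 1, "y": 2, "z": 3})
print(values["sum"], values["product"])  # 3 9
```

Each function node only needs the values on its inbound edges, which is why the order of evaluation matters: `sum` must be ready before `product` can run.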

Need for Computational Graph

Well, this was a simple computational graph with 5 nodes and 5 edges. But even fairly simple deep neural networks have hundreds of thousands of nodes and edges, and sometimes more than a million. In such a case, it would be practically impossible to write out a single function expression for the whole network. This is where computational graphs come in handy.

Such graphs also help us describe backpropagation more precisely.

Composite Function in Computational Graphs

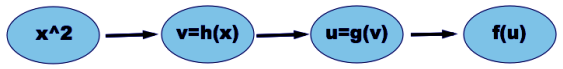

We take the function $e^{\sin(x^2)}$ as an example. Let’s decompose it into three simpler functions:

$f(x) = e^x$

$g(x) = \sin x$

$h(x) = x^2$

$f(g(h(x))) = e^{g(h(x))} = e^{\sin(x^2)}$
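The decomposition above can be sketched in Python as three small functions applied one after another. The helper names `composite` and `derivative` are assumptions for illustration. The derivative uses the chain rule, reusing the intermediate values that the forward pass already computed.

```python
import math


def h(x):
    return x ** 2          # innermost node: x^2


def g(x):
    return math.sin(x)     # middle node: sin(.)


def f(x):
    return math.exp(x)     # outermost node: e^(.)


def composite(x):
    """Evaluate e^(sin(x^2)) one node at a time, keeping each intermediate value."""
    a = h(x)
    b = g(a)
    c = f(b)
    return a, b, c


def derivative(x):
    """Chain rule: f'(g(h(x))) * g'(h(x)) * h'(x) = e^(sin(x^2)) * cos(x^2) * 2x."""
    a, b, c = composite(x)
    return c * math.cos(a) * 2 * x


a, b, c = composite(2.0)
print(c == math.exp(math.sin(2.0 ** 2)))  # True
```

Splitting the function into nodes means each piece only needs to know its own local derivative, which is the idea backpropagation builds on.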

We have the following computational graph for this-

Visualizing a Computation Graph in Python

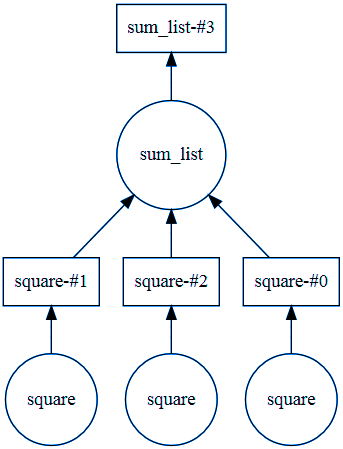

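Now let’s use the Dask library to produce such a graph.

    >>> from dask import delayed, compute
    >>> import dask
    >>> # @delayed makes each call build a graph node instead of running immediately
    >>> @delayed
    ... def square(num):
    ...     print("Square function:", num)
    ...     print()
    ...     return num * num
    ...
    >>> @delayed
    ... def sum_list(args):
    ...     print("Sum_list function:", args)
    ...     return sum(args)
    ...
    >>> items = [1, 2, 3]
    >>> # Build the graph: three square nodes feeding one sum_list node
    >>> computation_graph = sum_list([square(i) for i in items])
    >>> # Render the graph (requires Graphviz; see the setup notes below)
    >>> computation_graph.visualize()
    <IPython.core.display.Image object>
    >>> computation_graph.visualize(filename='sumlist.svg')
    <IPython.core.display.SVG object>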
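Notice that the session above only builds and draws the graph; none of the print statements inside `square` or `sum_list` run until the graph is evaluated. With Dask, that step is `computation_graph.compute()`, which for the items 1, 2, 3 gives 1 + 4 + 9 = 14. To show the idea of deferred evaluation without any dependency, here is a small pure-Python sketch. The `Lazy` class, the `lazy` decorator, and the `_resolve` helper are hypothetical stand-ins written for this example, not Dask's actual implementation.

```python
class Lazy:
    """Hypothetical stand-in for a delayed call: stores a function and its arguments."""

    def __init__(self, func, *args):
        self.func = func
        self.args = args

    def compute(self):
        # Resolve Lazy arguments (including those inside lists) first,
        # then call the stored function on the results.
        resolved = [_resolve(arg) for arg in self.args]
        return self.func(*resolved)


def _resolve(value):
    if isinstance(value, Lazy):
        return value.compute()
    if isinstance(value, list):
        return [_resolve(item) for item in value]
    return value


def lazy(func):
    """Decorator: calling the wrapped function builds a graph node instead of running it."""
    def wrapper(*args):
        return Lazy(func, *args)
    return wrapper


calls = []  # records the order in which the real functions run


@lazy
def square(num):
    calls.append(("square", num))
    return num * num


@lazy
def sum_list(args):
    calls.append(("sum_list", tuple(args)))
    return sum(args)


graph = sum_list([square(i) for i in [1, 2, 3]])
ran_before_compute = len(calls)   # 0: building the graph ran nothing
result = graph.compute()          # the squares run first, then the sum
print(ran_before_compute, result)  # 0 14
```

Separating graph construction from execution is what lets a scheduler inspect the whole graph first and decide which tasks can run in parallel.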

Note that for the visualize() calls above to work, you will need to perform three tasks:

1. Install the dask library with pip:

pip install dask

2. Download and unzip the Graphviz packages for Windows:

https://graphviz.gitlab.io/_pages/Download/Download_windows.html

3. Add the first path below to your user Path and the second to your system Path in the environment variables:

C:\Users\Ayushi\Desktop\graphviz-2.38\release\bin (Use your own path for bin)

C:\Users\Ayushi\Desktop\graphviz-2.38\release\bin\dot.exe
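Once the three steps are done, a quick way to confirm that Graphviz is reachable is to ask Python to locate the `dot` executable on your PATH. This is only a sanity check under the assumption that Dask's default rendering relies on `dot`; the function name `find_graphviz_dot` is made up for this example. Depending on your Dask version, the Python Graphviz bindings (`pip install graphviz`) may also be needed, so treat that as something to verify for your setup.

```python
import shutil


def find_graphviz_dot():
    """Return the full path of the Graphviz `dot` executable, or None if it is not on PATH."""
    return shutil.which("dot")


dot_path = find_graphviz_dot()
if dot_path is None:
    print("dot was not found on PATH; revisit the environment variable step.")
else:
    print("Graphviz dot found at:", dot_path)
```

If the check reports that `dot` is missing, open a new terminal after editing the environment variables, since running programs do not pick up PATH changes.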

Dynamic Deep Learning Python Computational Graphs

A Dynamic Computational Graph (DCG) is a mutable directed graph with operations as vertices and data as edges. In effect, it is a system of libraries, interfaces, and components. These deliver a flexible, programmatic, runtime interface that lets us construct and modify systems by connecting operations.

DCGs are useful because, when each data point in a data set has its own type or shape, it becomes a problem for the neural network to batch such data with a static graph. However, DCGs suffer from the issues of inefficient batching and poor tooling. As a workaround, we use an algorithm called Dynamic Batching.
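To illustrate what "dynamic" means here, the following sketch builds a new graph for every input, so the number of vertices depends on the shape of the data. The `Node` class and the helpers `build_sum_graph` and `count_nodes` are hypothetical names for this example, and the sketch assumes a non-empty input sequence. It does not implement Dynamic Batching itself; it only shows why inputs of different shapes lead to graphs of different shapes.

```python
class Node:
    """A vertex in the graph: an operation plus the nodes that feed it."""

    def __init__(self, op, inputs=(), value=None):
        self.op = op
        self.inputs = tuple(inputs)
        self.value = value


def build_sum_graph(sequence):
    """Build an addition chain at runtime; its length depends on the input sequence."""
    nodes = [Node("input", value=v) for v in sequence]
    total = nodes[0]
    for nxt in nodes[1:]:
        total = Node("add", inputs=(total, nxt), value=total.value + nxt.value)
    return total


def count_nodes(node):
    """Count all vertices reachable from `node` (the graph built here is a tree)."""
    return 1 + sum(count_nodes(parent) for parent in node.inputs)


short = build_sum_graph([1, 2])
long = build_sum_graph([1, 2, 3, 4, 5])
print(count_nodes(short), short.value)  # 3 3
print(count_nodes(long), long.value)    # 9 15
```

Because the two graphs differ in size, they cannot simply be stacked into one fixed batch, which is the batching problem described above.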

Forward and Backward Propagation in Computational Graphs

First, let’s talk about forward propagation. Here, we loop over nodes in a topological order. In other words, we pass the values of the variables in the forward direction (left to right). Given a node’s inputs, we compute its value.

In backward propagation, however, we start at a final goal node and loop over the nodes in a reverse topological order. Here, we compute the derivatives of the final goal node value with respect to each edge’s tail node.
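Both passes can be sketched for the earlier expression f(x,y,z)=(x+y)*z. This is a minimal illustration, not how any particular framework implements it; the `GRAPH` layout and the names `forward`, `backward`, and the intermediate node `q` are assumptions made for this example.

```python
# Each function node: name -> (operation, input names). The insertion order is
# a valid topological order for this small graph.
GRAPH = {
    "q": ("add", ("x", "y")),   # q = x + y
    "f": ("mul", ("q", "z")),   # f = q * z
}


def forward(inputs):
    """Visit nodes in topological order and compute each value from its inputs."""
    values = dict(inputs)
    for name, (op, (a, b)) in GRAPH.items():
        if op == "add":
            values[name] = values[a] + values[b]
        else:  # mul
            values[name] = values[a] * values[b]
    return values


def backward(values, goal="f"):
    """Visit nodes in reverse topological order, accumulating d(goal)/d(node)."""
    grads = {name: 0.0 for name in values}
    grads[goal] = 1.0
    for name in reversed(list(GRAPH)):
        op, (a, b) = GRAPH[name]
        if op == "add":
            grads[a] += grads[name]
            grads[b] += grads[name]
        else:  # mul: each input's derivative is the other input's value
            grads[a] += grads[name] * values[b]
            grads[b] += grads[name] * values[a]
    return grads


values = forward({"x": 1.0, "y": 2.0, "z": 3.0})
grads = backward(values)
print(values["f"])                         # 9.0
print(grads["x"], grads["y"], grads["z"])  # 3.0 3.0 3.0
```

The backward pass reuses the values stored during the forward pass, so one sweep in each direction yields the derivative with respect to every input.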

Computational graphs are also useful for debugging deep, many-layered models. When developers sketch how data moves through the functions, they notice inefficiencies and glitches more quickly. This transparency is important for improving models and getting correct results.

In addition, computational graphs help make the training process efficient. Techniques such as automatic differentiation use the graph to compute gradients, and gradient descent then uses those gradients to update the model parameters. This optimization is crucial for training large models accurately and within acceptable time frames.
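As a small illustration of gradients driving parameter updates, the sketch below fits a single weight `w` so that `w * x` matches a target `y`. The loss graph (p = w*x, d = p - y, loss = d**2), the function names, and the learning rate and step count are all assumptions chosen for this example.

```python
def loss_and_grad(w, x, y):
    """Forward pass through p = w*x, d = p - y, loss = d**2, then the backward pass."""
    p = w * x
    d = p - y
    loss = d ** 2
    dloss_dd = 2 * d          # derivative of d**2 with respect to d
    dloss_dp = dloss_dd       # d = p - y, so dd/dp = 1
    dloss_dw = dloss_dp * x   # p = w*x, so dp/dw = x
    return loss, dloss_dw


def train(w, x, y, learning_rate=0.1, steps=20):
    """Plain gradient descent on the single parameter w."""
    for _ in range(steps):
        _, grad = loss_and_grad(w, x, y)
        w -= learning_rate * grad
    return w


w = train(w=0.0, x=2.0, y=6.0)
print(round(w, 6))  # 3.0
```

Real frameworks do the same thing at scale: the backward pass over the graph supplies the gradient for every parameter, and the optimizer applies the update.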

So, this was all about computational graphs in Deep Learning with Python. We hope you liked our explanation.

Conclusion

Computational graphs are like maps that show how data flows through a deep learning model. They help break down complex math into small, easy steps. In deep learning, every operation, such as adding, multiplying, or applying a function, is a part of this graph. These steps connect like a chain, and this full chain is called the computational graph. Python libraries like TensorFlow and PyTorch use this concept to train models faster and more efficiently.

Hence, we wind up our look at computational graphs for Deep Learning with Python. We discussed forward and backward propagation through computational graphs and how to implement such graphs in Python. If you have any queries, feel free to ask in the comment box.
