What is Heuristic Search – Techniques & Hill Climbing in AI

Machine Learning courses with 100+ Real-time projects Start Now!!

Master Python with 70+ Hands-on Projects and Get Job-ready - Learn Python

In this Python AI tutorial, we will discuss the rudiments of Heuristic Search, which is an integral part of Artificial Intelligence.

We will talk about different techniques like Constraint Satisfaction Problems, Hill Climbing, and Simulated Annealing. Also, we will implement CSP in Python.

So, let’s begin Heuristic Search in AI Tutorial.

What is a Heuristic Search?

Heuristic search is a method in AI that helps find the best path or solution quickly. Instead of checking all options, it uses rules or guesses to find answers faster. Python helps us write programs that use heuristic search in games, robots, and planning tools.

Python supports many search algorithms like A*, Greedy Search, and Hill Climbing. These methods are used to solve problems like puzzle games, route planning, and decision-making in real-time systems. Libraries like networkx help visualize search paths and graphs. Heuristics make AI programs smarter by reducing the time and effort needed to reach the goal.

Using Python for heuristic search lets you explore problems where the solution is hidden in a big space. For example, in a maze, you don’t need to try every path. Heuristics guide the program to look where the solution is most likely to be. This makes the process fast and efficient, saving memory and time.

So why do we need heuristics? One reason is to produce, in a reasonable amount of time, a solution that is good enough for the problem in question. It doesn’t have to be the best- an approximate solution will do since this is fast enough.

Most problems are exponential. Heuristic Search let us reduce this to a rather polynomial number. We use this in AI because we can put it to use in situations where we can’t find known algorithms.

We can say Heuristic Techniques are weak methods because they are vulnerable to combinatorial explosion.

Heuristic Search Techniques in Artificial Intelligence

Briefly, we can taxonomize such techniques of Heuristic into two categories:

a. Direct Heuristic Search Techniques in AI

Other names for these are Blind Search, Uninformed Search, and Blind Control Strategy. These aren’t always possible since they demand much time or memory.

They search the entire state space for a solution and use an arbitrary ordering of operations. Examples of these are Breadth First Search (BFS) and Depth First Search (DFS).

b. Weak Heuristic Search Techniques in AI

Other names for these are Informed Search, Heuristic Search, and Heuristic Control Strategy. These are effective if applied correctly to the right types of tasks and usually demand domain-specific information.

We need this extra information to compute preference among child nodes to explore and expand. Each node has a heuristic function associated with it. Examples are Best First Search (BFS) and A*.

Before we move on to describe certain techniques, let’s first take a look at the ones we generally observe. Below, we name a few.

- Best-First Search

- A* Search

- Bidirectional Search

- Tabu Search

- Beam Search

- Simulated Annealing

- Hill Climbing

- Constraint Satisfaction Problems

Hill Climbing in Artificial Intelligence

First, let’s talk about Hill Climbing in Artificial Intelligence. This is a heuristic for optimizing problems mathematically. We need to choose values from the input to maximize or minimize a real function. It is okay if the solution isn’t the global optimal maximum.

One such example of Hill Climbing will be the widely discussed Travelling Salesman Problem- one where we must minimize the distance he travels.

a. Features of Hill Climbing in AI

Let’s discuss some of the features of this algorithm (Hill Climbing):

- It is a variant of the generate-and-test algorithm

- It makes use of the greedy approach

This means it keeps generating possible solutions until it finds the expected solution, and moves only in the direction that optimizes the cost function for it.

b. Types of Hill Climbing in AI

- Simple Hill Climbing- This examines one neighboring node at a time and selects the first one that optimizes the current cost to be the next node.

- Steepest Ascent Hill Climbing- This examines all neighboring nodes and selects the one closest to the solution state.

- Stochastic Hill Climbing- This selects a neighboring node at random and decides whether to move to it or examine another.

Let’s take a look at the algorithm for simple hill climbing.

- Evaluate initial state- if goal state, stop and return success. Otherwise, make the initial state current.

- Loop until the solution is reached or until no new operators are left to apply to the current state:

a. Select a new operator to apply to the current producing new state.

b. Evaluate new state:

- If a goal state, stop and return success.

- If better than the current state, make it the current state, proceed.

- Even if not better than the current state, continue until the solution is reached.

- Exit.

c. Problems with Hill Climbing in AI

We usually run into one of three issues-

- Local Maximum- All neighboring states have values worse than the current. The greedy approach means we won’t be moving to a worse state. This terminates the process even though there may have been a better solution. As a workaround, we use backtracking.

- Plateau- All neighbors to it have the same value. This makes it impossible to choose a direction. To avoid this, we randomly make a big jump.

- Ridge- At a ridge, movement in all possible directions is downward. This makes it look like a peak and terminates the process. To avoid this, we may use two or more rules before testing.

Constraint Satisfaction Problems (CSP) in AI

A constraint is nothing but a limitation or a restriction. Working with AI, we may need to satisfy some constraints to solve problems. Let’s try solving a problem this way, shall we?

Common applications of CSP include:

- Scheduling: It assigns resources like employees and equipment at the right time and when it is available.

- Planning: It helps in organising tasks in sequence and with specific deadlines.

- Allocation of resources: Without any overuse, the resources are distributed efficiently.

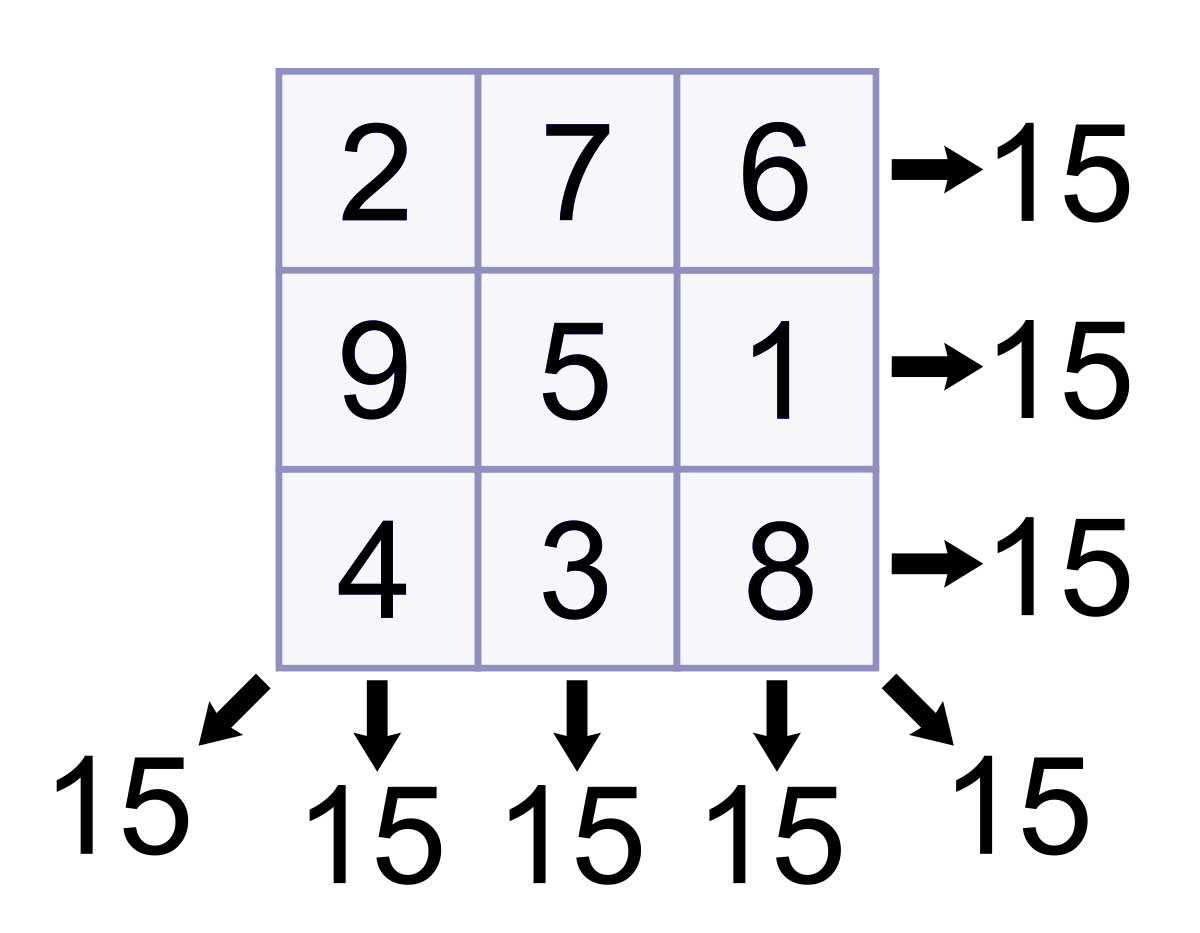

Let’s talk about a magic square. This is a sequence of numbers- usually integers- arranged in a square grid. The numbers in each row, each column, and each diagonal all add up to a constant, which we call the Magic Constant. Let’s implement this with Python.

def magic_square(matrix):

size=len(matrix[0])

sum_list=[]

for col in range(size): #Vertical sum

sum_list.append(sum(row[col] for row in matrix))

sum_list.extend([sum(lines) for lines in matrix])#Horizontal sum

result1=0

for i in range(0,size):

result1+=matrix[i][i]

sum_list.append(result1)

result2=0

for i in range(size-1,-1,-1):

result2+=matrix[i][i]

sum_list.append(result2)

if len(set(sum_list))>1:

return False

return TrueNow let’s run this code.

>>> magic_square([[1,2,3],[4,5,6],[7,8,9]])

False

This is not a magic square. The numbers in the rows/columns/diagonals do not add up to the same value. Let’s try another list of lists.

>>> magic_square([[2,7,6],[9,5,1],[4,3,8]])

True

Since the values add up to the constant 15 in all directions, surely, this is a magic square!

Simulated Annealing Heuristic Search in AI

In metallurgy, when we slow-cool metals to pull them down to a state of low energy gives them exemplary amounts of strength. We call this annealing. While high temperatures exhibit much random movement, low temperatures show little randomness.

In AI, we take a cue from this to produce something called simulated annealing. This is a way of optimization where we begin with a random search at a high temperature and reduce the temperature slowly.

Eventually, as the temperature approaches zero, the search becomes pure greedy descent. At each step, this process randomly selects a variable and a value. It accepts the assignment only when it is an improvement or doesn’t lead to more conflict.

If not, it checks if the temperature is much worse than the current assignment to accept the assignment with some probability.

An annealing schedule defines how the temperature drops as the search progresses. A very common schedule is geometric cooling. If we begin with a temperature of 10 and multiply by 0.97 after every step, then after 100 steps, we’re left with a temperature of 0.48.

Best-First Search (BFS) in AI

Often dubbed BFS, Best First Search is an informed search that uses an evaluation function to decide which adjacent is the most promising before it can continue to explore. Breadth- and Depth- First Searches blindly explore paths without keeping a cost function in mind.

Things aren’t the same with BFS, though. Here, we use a priority queue to store node costs. Let’s understand BFS Heuristic Search through pseudocode.

- Define list OPEN with a single node s– the start node.

- If the list is empty, return failure.

- Remove node n (node with best score) from the list, and move it to list CLOSED.

- Expand node n.

- IF any successor to n is the goal node, return success and trace the path from the goal node to s to return the solution.

- FOR each successor node:

- Apply the evaluation function f.

- IF the node isn’t in either list, add it to list OPEN.

- Loop to step 2.

So, this was all in Heuristic Search Techniques in AI. Hope you like our explanation.

Conclusion

Hence, in this Python AI tutorial, we discussed the Heuristic Search in AI. While we named a few techniques that fall under that, we also discussed, in brief, those like BFS, Hill Climbing, Simulated Annealing, and CSP.

We also implemented CSP in Python. Still, if you have any queries about Heuristic Search Techniques, feel free to ask in the comments tab.

We work very hard to provide you quality material

Could you take 15 seconds and share your happy experience on Google

How to solve shuffled frog leaping algorithm (SFLA) through python ,i am asking about implementation of coding SFLA in python?

You have to study the algorithm first and then you can use any IDE to implement it using python concepts that we have studied so far.

explanation was too good

Why the hell you people can’t let the reader concentrate?, popping up completely out of the subject topics in between!! WHEN You can give them at the bottom of the page!