Python Chatbot Project – Learn to build your first chatbot using NLTK & Keras


Hey Siri, What’s the meaning of Life?

As per all the evidence, it’s chocolate for you.

As soon as I heard this reply from Siri, I knew I had found the perfect partner to savour my hours of solitude. From silly questions to some pretty serious advice, Siri has always been there for me.

How amazing it is to tell someone anything and everything without being judged at all. It is a top-class feeling, and that is the beauty of a chatbot.

This is the 9th project in DataFlair’s series of 20 Python projects. Make sure to bookmark the other projects in the series:

- Fake News Detection Python Project

- Parkinson’s Disease Detection Python Project

- Color Detection Python Project

- Speech Emotion Recognition Python Project

- Breast Cancer Classification Python Project

- Age and Gender Detection Python Project

- Handwritten Digit Recognition Python Project

- Chatbot Python Project

- Driver Drowsiness Detection Python Project

- Traffic Signs Recognition Python Project

- Image Caption Generator Python Project

What is a Chatbot?

A chatbot is a piece of software that can communicate and perform actions in a way similar to a human. Chatbots are widely used for customer interaction, marketing on social network sites, and instant messaging with clients. There are two basic types of chatbot models, based on how they are built: retrieval-based and generative models.

1. Retrieval-based Chatbots

A retrieval-based chatbot uses predefined input patterns and responses. It then uses some type of heuristic to select the appropriate response. This approach is widely used in industry to build goal-oriented chatbots, where we can customize the tone and flow of the chatbot to give our customers the best experience.
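A minimal sketch of the retrieval idea, assuming a tiny hand-written table of patterns and responses and a simple word-overlap score as the heuristic. The names RESPONSES and retrieve_response and the scoring rule are illustrative only; the project in this article uses a neural classifier instead.

```python
import re

# Hypothetical pattern -> response table, for illustration only.
RESPONSES = {
    "hello hi hey": "Hello! How can I help you?",
    "bye goodbye see you": "Goodbye, have a nice day!",
    "thanks thank you": "You're welcome!",
}


def retrieve_response(message, table=RESPONSES, default="Sorry, I don't understand."):
    """Pick the response whose pattern shares the most words with the message."""
    tokens = set(re.findall(r"[a-z']+", message.lower()))
    best_score, best_response = 0, default
    for pattern, response in table.items():
        score = len(tokens & set(pattern.split()))
        if score > best_score:
            best_score, best_response = score, response
    return best_response


print(retrieve_response("Hi there"))
print(retrieve_response("What is the weather?"))
```

A message that shares no word with any pattern falls through to the default reply, which is the simplest way to handle input the bot was never given a pattern for.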

2. Generative Chatbots

Generative models are not based on predefined responses.

They are based on sequence-to-sequence (seq2seq) neural networks. It is the same idea as machine translation. In machine translation, we translate text from a source language into another language; here, we transform an input message into an output response. This approach needs a large amount of data and is based on deep neural networks.

About the Python Project – Chatbot

In this Python project with source code, we are going to build a chatbot using deep learning techniques. The chatbot will be trained on a dataset that contains categories (intents), patterns and responses. We use a feed-forward neural network (a stack of Dense layers over bag-of-words features) to classify which category the user’s message belongs to, and then give a random response from that category’s list of responses.

Let’s create a retrieval based chatbot using NLTK, Keras, Python, etc.

Download Chatbot Code & Dataset

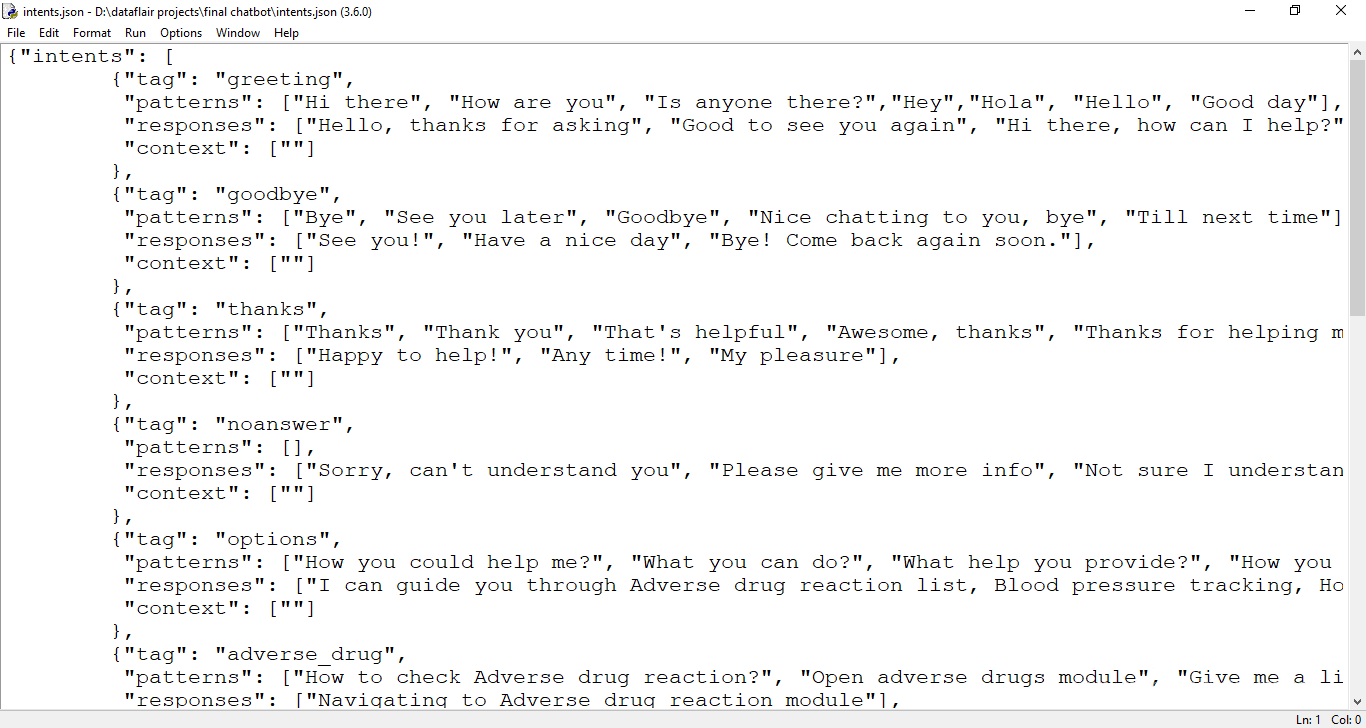

The dataset we will be using is ‘intents.json’. This is a JSON file that contains the patterns we need to find and the responses we want to return to the user.

Please download python chatbot code & dataset from the following link: Python Chatbot Code & Dataset

Prerequisites

The project requires you to have good knowledge of Python, Keras, and natural language processing with NLTK. We will also use a few helper modules, which you can install with pip. The pickle module ships with Python’s standard library, so it does not need to be installed.

pip install tensorflow keras nltk

How to Make Chatbot in Python?

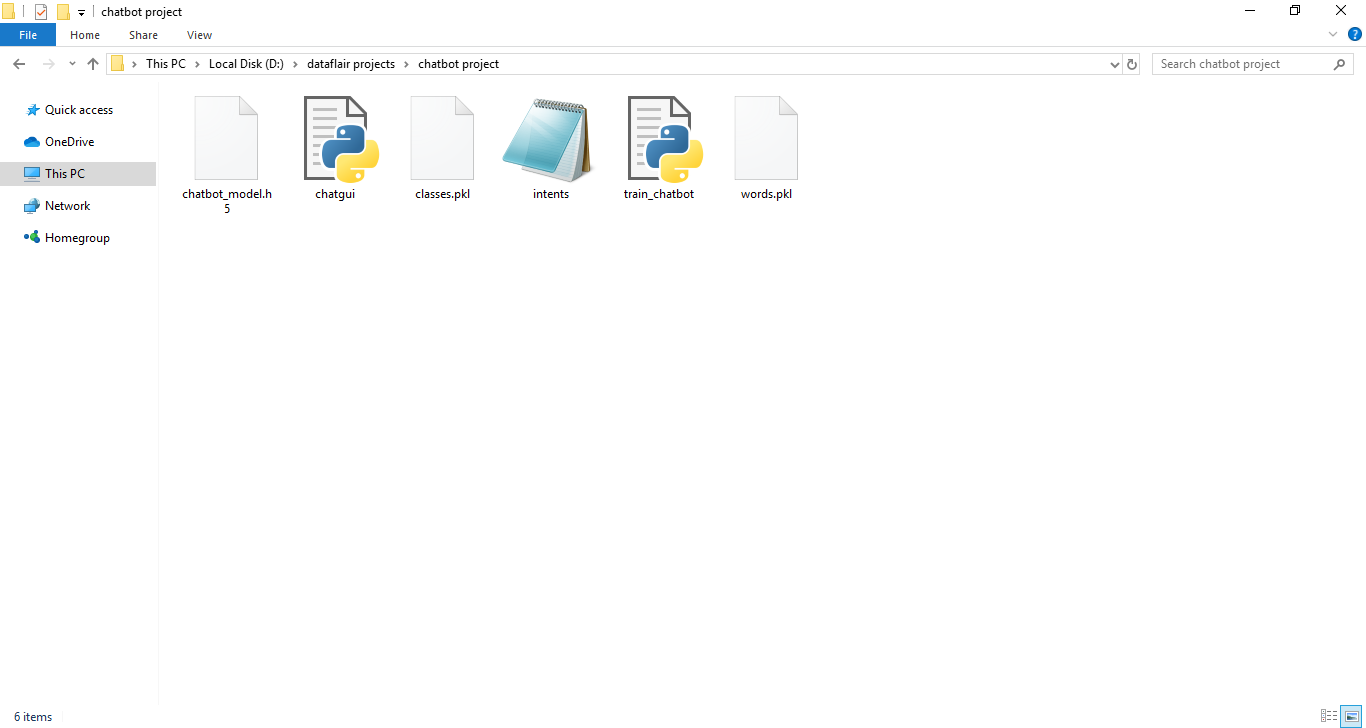

Now we are going to build the chatbot using Python but first, let us see the file structure and the type of files we will be creating:

- intents.json – The data file which has predefined patterns and responses.

- train_chatbot.py – In this Python file, we write a script to build the model and train our chatbot.

- words.pkl – This is a pickle file in which we store the words Python object that contains a list of our vocabulary.

- classes.pkl – The classes pickle file contains the list of categories.

- chatbot_model.h5 – This is the trained model, which contains the model architecture and the weights of the neurons.

- chatgui.py – This is the Python script in which we implement the GUI for our chatbot. Users can easily interact with the bot.

Here are the 5 steps to create a chatbot in Python from scratch:

- Import and load the data file

- Preprocess data

- Create training and testing data

- Build the model

- Predict the response

1. Import and load the data file

First, create a file named train_chatbot.py. We import the necessary packages for our chatbot and initialize the variables we will use in our Python project.

The data file is in JSON format so we used the json package to parse the JSON file into Python.

import json
import pickle
import random

import nltk
import numpy as np
from nltk.stem import WordNetLemmatizer
from keras.models import Sequential
from keras.layers import Dense, Dropout
from keras.optimizers import SGD

# One-time setup on a fresh install (reported by a reader in the comments):
# nltk.download('punkt')
# nltk.download('wordnet')

lemmatizer = WordNetLemmatizer()

words = []
classes = []
documents = []
ignore_words = ['?', '!']

# parse the JSON data file into a Python dict
with open('intents.json') as data_file:
    intents = json.loads(data_file.read())

This is what our intents.json file looks like.
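As a rough guide, the sketch below shows the structure the code in this article expects, inferred from the keys it reads (intents, tag, patterns, responses). The tags and phrases are made up for illustration and are not taken from the downloadable dataset.

```python
import json

# Hypothetical contents of an intents file; tags and phrases are made up.
SAMPLE_INTENTS_JSON = """
{
  "intents": [
    {
      "tag": "greeting",
      "patterns": ["Hi", "Hello", "Good day"],
      "responses": ["Hello!", "Hi there, how can I help?"]
    },
    {
      "tag": "goodbye",
      "patterns": ["Bye", "See you later"],
      "responses": ["Goodbye!", "Talk to you soon."]
    }
  ]
}
"""

sample_intents = json.loads(SAMPLE_INTENTS_JSON)
for intent in sample_intents["intents"]:
    print(intent["tag"], "-", len(intent["patterns"]), "patterns,",
          len(intent["responses"]), "responses")
```

Each tag becomes one class for the classifier, each pattern becomes one training sentence, and the responses are only used at reply time.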

2. Preprocess data

When working with text data, we need to perform various preprocessing on the data before we make a machine learning or a deep learning model. Based on the requirements we need to apply various operations to preprocess the data.

Tokenizing is the most basic and first thing you can do on text data. Tokenizing is the process of breaking the whole text into small parts like words.
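To illustrate what tokenizing produces without requiring the NLTK data files, here is a rough stand-in built on a regular expression. It is only an approximation: simple_tokenize is a hypothetical helper, and nltk.word_tokenize() treats contractions and punctuation more carefully.

```python
import re


def simple_tokenize(text):
    """Split text into word tokens and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = simple_tokenize("Hi there, how are you?")
print(tokens)  # ['Hi', 'there', ',', 'how', 'are', 'you', '?']
```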

Here we iterate through the patterns, tokenize each sentence with the nltk.word_tokenize() function, and append each word to the words list. We also build the list of classes from the tags.

for intent in intents['intents']:
    for pattern in intent['patterns']:
        # tokenize each word
        w = nltk.word_tokenize(pattern)
        words.extend(w)
        # add documents in the corpus
        documents.append((w, intent['tag']))
        # add to our classes list
        if intent['tag'] not in classes:
            classes.append(intent['tag'])

Now we will lemmatize each word and remove duplicate words from the list. Lemmatizing is the process of converting a word into its lemma (base) form. We then create pickle files to store the Python objects that we will use while predicting.

# lemmatize, lower each word and remove duplicates
words = [lemmatizer.lemmatize(w.lower()) for w in words if w not in ignore_words]
words = sorted(set(words))
# sort classes
classes = sorted(set(classes))
# documents = combination between patterns and intents
print(len(documents), "documents")
# classes = intents
print(len(classes), "classes", classes)
# words = all words, vocabulary
print(len(words), "unique lemmatized words", words)

with open('words.pkl', 'wb') as f:
    pickle.dump(words, f)
with open('classes.pkl', 'wb') as f:
    pickle.dump(classes, f)

3. Create training and testing data

Now, we will create the training data in which we will provide the input and the output. Our input will be the pattern and output will be the class our input pattern belongs to. But the computer doesn’t understand text so we will convert text into numbers.
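A toy-sized sketch of that conversion, assuming a five-word vocabulary and two classes invented for illustration. The helper name encode is hypothetical. Each pattern becomes a bag-of-words vector (1 where a vocabulary word occurs in the pattern) and each tag becomes a one-hot vector.

```python
def encode(pattern_words, vocabulary, tag, classes):
    """Return (bag, one_hot) for one tokenized pattern."""
    bag = [1 if word in pattern_words else 0 for word in vocabulary]
    one_hot = [1 if c == tag else 0 for c in classes]
    return bag, one_hot


# Toy vocabulary and classes, made up for illustration.
toy_vocabulary = ["bye", "hello", "hi", "see", "you"]
toy_classes = ["goodbye", "greeting"]

bag, label = encode(["see", "you"], toy_vocabulary, "goodbye", toy_classes)
print(bag)    # [0, 0, 0, 1, 1]
print(label)  # [1, 0]
```

Word order and repetition are discarded by this encoding; only the presence of each vocabulary word is kept.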

# create our training data
training = []
# create an empty array for our output
output_empty = [0] * len(classes)
# training set, bag of words for each sentence
for doc in documents:
    # initialize our bag of words
    bag = []
    # list of tokenized words for the pattern
    pattern_words = doc[0]
    # lemmatize each word - create base word, in attempt to represent related words
    pattern_words = [lemmatizer.lemmatize(word.lower()) for word in pattern_words]
    # create our bag of words array with 1, if word match found in current pattern
    for w in words:
        bag.append(1 if w in pattern_words else 0)
    # output is a '0' for each tag and '1' for current tag (for each pattern)
    output_row = list(output_empty)
    output_row[classes.index(doc[1])] = 1
    training.append([bag, output_row])

# shuffle our features and turn into np.array (outside the loop)
# dtype=object is needed because each row pairs two lists of different lengths
random.shuffle(training)
training = np.array(training, dtype=object)
# split into features and labels. X - patterns, Y - intents
train_x = list(training[:, 0])
train_y = list(training[:, 1])
print("Training data created")

4. Build the model

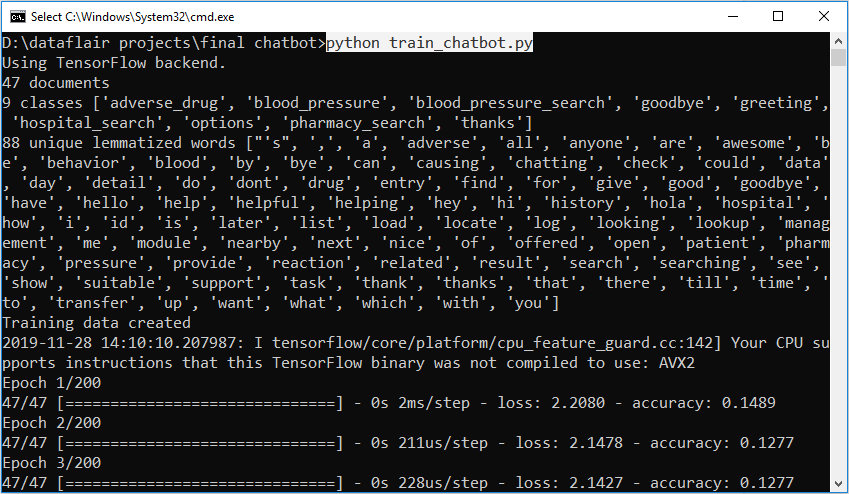

We have our training data ready, so now we will build a deep neural network that has 3 layers, using the Keras Sequential API. After training the model for 200 epochs, we achieved 100% accuracy on the training data. Let us save the model as ‘chatbot_model.h5’.
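For a sense of the model's size: a Dense layer has inputs × units weights plus one bias per unit, and Dropout layers add no parameters. The sketch below counts the trainable parameters for a 128-64-output stack of Dense layers, assuming a hypothetical vocabulary of 88 words and 9 intents. The real numbers depend on your intents.json, and the helper names are illustrative.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of one fully connected layer."""
    return n_inputs * n_units + n_units


def count_params(vocab_size, n_classes, hidden=(128, 64)):
    """Total trainable parameters of the stacked Dense layers."""
    sizes = [vocab_size, *hidden, n_classes]
    return sum(dense_params(a, b) for a, b in zip(sizes, sizes[1:]))


print(count_params(vocab_size=88, n_classes=9))  # 11392 + 8256 + 585 = 20233
```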

# Create model - 3 layers. First layer 128 neurons, second layer 64 neurons and 3rd output layer contains number of neurons
# equal to number of intents to predict output intent with softmax
model = Sequential()
model.add(Dense(128, input_shape=(len(train_x[0]),), activation='relu'))
model.add(Dropout(0.5))
model.add(Dense(64, activation='relu'))
model.add(Dropout(0.5))
model.add(Dense(len(train_y[0]), activation='softmax'))

# Compile model. Stochastic gradient descent with Nesterov accelerated gradient gives good results for this model
# (learning_rate replaces the deprecated lr alias; newer Keras releases may also reject decay)
sgd = SGD(learning_rate=0.01, decay=1e-6, momentum=0.9, nesterov=True)
model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

# fitting and saving the model
hist = model.fit(np.array(train_x), np.array(train_y), epochs=200, batch_size=5, verbose=1)
# save() only needs the file path; the training history is not stored in the model file
model.save('chatbot_model.h5')
print("model created")

5. Predict the response (Graphical User Interface)

To predict the class of a sentence and return a response to the user, let us create a new file, ‘chatgui.py’.

We will load the trained model and then use a graphical user interface to show the bot’s response. The model only tells us the class the input belongs to, so we will implement some functions that identify the class and then retrieve a random response from that class’s list of responses.
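The selection step can be sketched without the trained model, assuming the model's output is a list of per-class probabilities. The numbers, class names and responses below are invented for illustration, and top_intents is a hypothetical helper.

```python
import random


def top_intents(probabilities, classes, threshold=0.25):
    """Keep classes whose probability exceeds the threshold, best first."""
    ranked = [(classes[i], p) for i, p in enumerate(probabilities) if p > threshold]
    ranked.sort(key=lambda pair: pair[1], reverse=True)
    return ranked


# Made-up model output and responses, for illustration only.
toy_classes = ["goodbye", "greeting", "thanks"]
toy_probabilities = [0.05, 0.65, 0.30]
toy_responses = {
    "goodbye": ["Bye!"],
    "greeting": ["Hello!", "Hi there!"],
    "thanks": ["You're welcome!"],
}

ranked = top_intents(toy_probabilities, toy_classes)
print(ranked)  # [('greeting', 0.65), ('thanks', 0.3)]
print(random.choice(toy_responses[ranked[0][0]]))
```

The first entry of the ranked list is the predicted intent. If no class clears the threshold, the list is empty, so calling code should check for that before indexing into it.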

Again we import the necessary packages and load the ‘words.pkl’ and ‘classes.pkl’ pickle files which we have created when we trained our model:

import json
import pickle
import random

import nltk
import numpy as np
from nltk.stem import WordNetLemmatizer
from keras.models import load_model

lemmatizer = WordNetLemmatizer()
model = load_model('chatbot_model.h5')

with open('intents.json') as f:
    intents = json.load(f)
with open('words.pkl', 'rb') as f:
    words = pickle.load(f)
with open('classes.pkl', 'rb') as f:
    classes = pickle.load(f)

To predict the class, we need to provide input in the same way as we did while training. So we will create some functions that perform text preprocessing and then predict the class.

def clean_up_sentence(sentence):
    # tokenize the pattern - split words into array
    sentence_words = nltk.word_tokenize(sentence)
    # lemmatize each word - reduce it to its base form
    sentence_words = [lemmatizer.lemmatize(word.lower()) for word in sentence_words]
    return sentence_words

# return bag of words array: 0 or 1 for each word in the bag that exists in the sentence
def bow(sentence, words, show_details=True):
    # tokenize the pattern
    sentence_words = clean_up_sentence(sentence)
    # bag of words - one slot per vocabulary word
    bag = [0] * len(words)
    for s in sentence_words:
        for i, w in enumerate(words):
            if w == s:
                # assign 1 if current word is in the vocabulary position
                bag[i] = 1
                if show_details:
                    print("found in bag: %s" % w)
    return np.array(bag)

def predict_class(sentence, model):
    p = bow(sentence, words, show_details=False)
    res = model.predict(np.array([p]))[0]
    # filter out predictions below a threshold
    ERROR_THRESHOLD = 0.25
    results = [[i, r] for i, r in enumerate(res) if r > ERROR_THRESHOLD]
    # sort by strength of probability
    results.sort(key=lambda x: x[1], reverse=True)
    return_list = []
    for r in results:
        return_list.append({"intent": classes[r[0]], "probability": str(r[1])})
    return return_list

After predicting the class, we will get a random response from the list of intents.

def getResponse(ints, intents_json):

tag = ints[0]['intent']

list_of_intents = intents_json['intents']

for i in list_of_intents:

if(i['tag']== tag):

result = random.choice(i['responses'])

break

return result

def chatbot_response(text):

ints = predict_class(text, model)

res = getResponse(ints, intents)

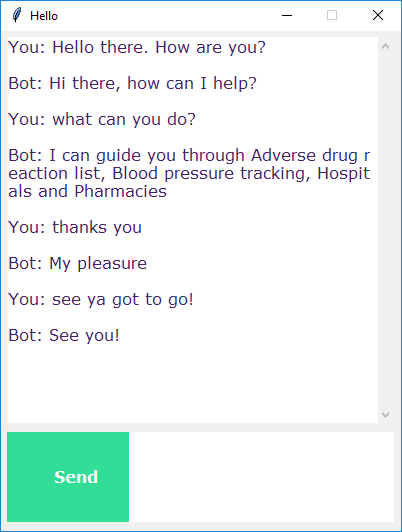

return resNow we will develop a graphical user interface. Let’s use Tkinter library which is shipped with tons of useful libraries for GUI. We will take the input message from the user and then use the helper functions we have created to get the response from the bot and display it on the GUI. Here is the full source code for the GUI.

#Creating GUI with tkinter
from tkinter import *

def send():
    msg = EntryBox.get("1.0", 'end-1c').strip()
    EntryBox.delete("0.0", END)
    if msg != '':
        ChatLog.config(state=NORMAL)
        ChatLog.insert(END, "You: " + msg + '\n\n')
        ChatLog.config(foreground="#442265", font=("Verdana", 12))
        res = chatbot_response(msg)
        ChatLog.insert(END, "Bot: " + res + '\n\n')
        ChatLog.config(state=DISABLED)
        ChatLog.yview(END)

base = Tk()
base.title("Hello")
base.geometry("400x500")
base.resizable(width=FALSE, height=FALSE)

#Create Chat window
ChatLog = Text(base, bd=0, bg="white", height="8", width="50", font="Arial")
ChatLog.config(state=DISABLED)

#Bind scrollbar to Chat window
scrollbar = Scrollbar(base, command=ChatLog.yview, cursor="heart")
ChatLog['yscrollcommand'] = scrollbar.set

#Create Button to send message
SendButton = Button(base, font=("Verdana", 12, 'bold'), text="Send", width="12", height=5,
                    bd=0, bg="#32de97", activebackground="#3c9d9b", fg='#ffffff',
                    command=send)

#Create the box to enter message
EntryBox = Text(base, bd=0, bg="white", width="29", height="5", font="Arial")
#EntryBox.bind("<Return>", send)

#Place all components on the screen
scrollbar.place(x=376, y=6, height=386)
ChatLog.place(x=6, y=6, height=386, width=370)
EntryBox.place(x=128, y=401, height=90, width=265)
SendButton.place(x=6, y=401, height=90)

base.mainloop()

6. Run the chatbot

To run the chatbot, we have two main files: train_chatbot.py and chatgui.py.

First, we train the model using the command in the terminal:

python train_chatbot.py

If we don’t see any error during training, we have successfully created the model. Then to run the app, we run the second file.

python chatgui.py

The program will open up a GUI window within a few seconds. With the GUI you can easily chat with the bot.


Summary

In this Python data science project, we learned about chatbots and implemented a retrieval-based chatbot in Python that classifies intents with a small neural network and reached 100% accuracy on its training data. You can customize the data according to your business requirements and retrain the chatbot. Chatbots are used everywhere, and many businesses are looking to add a bot to their workflow.

I hope you will practice by customizing your own chatbot using Python and don’t forget to show us your work. And, if you found the article useful, do share the project with your friends and colleagues.


Hello,

Thank you for this program.

I am actually getting an error in this part of the code:

training = []

# create an empty array for our output

output_empty = [0] * len(classes)

# training set, bag of words for each sentence

for doc in documents:

# initialize our bag of words

bag = []

# list of tokenized words for the pattern

pattern_words = doc[0]

# lemmatize each word – create base word, in attempt to represent related words

pattern_words = [lemmatizer.lemmatize(word.lower()) for word in pattern_words]

# create our bag of words array with 1, if word match found in current pattern

for w in words:

bag.append(1) if w in pattern_words else bag.append(0)

# output is a ‘0’ for each tag and ‘1’ for current tag (for each pattern)

output_row = list(output_empty)

output_row[classes.index(doc[1])] = 1

training.append([bag, output_row])

# shuffle our features and turn into np.array

random.shuffle(training)

training = np.array(training)

# create train and test lists. X – patterns, Y – intents

train_x = list(training[:,0])

train_y = list(training[:,1])

print(“Training data created”)

error:

Traceback (most recent call last):

File “train_chatbot.py”, line 94, in

training.append(bag)

AttributeError: ‘numpy.ndarray’ object has no attribute ‘append’

could you help?

Please use numpy.concatenate((a, b)) or numpy.append(arr, values). Note that both return a new array rather than modifying one in place.

how to add hyperlinks to the msgs?

You can add hyperlinks in the json response.

Hey, awesome project. I want to add hyperlinks and pictures in the chatbot response. Can you guide me through it?

You can add hyperlinks in the json response but not images

Hi, I want to add images and hyperlinks in the chatbot responses. Can you guide me through it?

Yes, You can add hyperlinks in the json response but not images

I’m getting an error

OSError: [winError 193] %1 is not a valid win32 application

How to solve this?

The error indicates that the file is not a valid executable. You need to specify the path to the correct executable. If the issue persists, please download fresh installers, install them, and start again from scratch.

is it possible to save chatlog conversations

Yes, you can save the chatlog conversations in a database. Connect to a database, then write each incoming and outgoing message with a timestamp.
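A minimal sketch of that idea, assuming SQLite through Python's built-in sqlite3 module. The table layout, the file name chatlog.db and the helper names open_log and log_message are illustrative choices, not part of the project code.

```python
import sqlite3
from datetime import datetime, timezone


def open_log(path="chatlog.db"):
    """Open (or create) the chat log database and its messages table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS messages ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "sent_at TEXT NOT NULL, sender TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return conn


def log_message(conn, sender, body):
    """Store one message together with a UTC timestamp."""
    conn.execute(
        "INSERT INTO messages (sent_at, sender, body) VALUES (?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), sender, body),
    )
    conn.commit()


conn = open_log(":memory:")  # in-memory database for the demo
log_message(conn, "You", "Hello")
log_message(conn, "Bot", "Hi there!")
for sender, body in conn.execute("SELECT sender, body FROM messages ORDER BY id"):
    print(sender + ":", body)
```

In the GUI, log_message could be called twice inside send(): once with the user's message and once with the bot's reply.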

As mentioned earlier by Sid, I am also always getting the last response of the JSON file as output.

I got no error but the GUI input window is not taking any inputs from the user. What to do?

Please post the error message and complete stacktrace, we will look into the issue.

Please compare your code with the code provided in the article. Also, check whether tkinter is installed properly

can anyone help me

Exception in Tkinter callback

Traceback (most recent call last):

File “C:\Users\SURYAPRAKASH\Anaconda3\lib\tkinter\__init__.py”, line 1705, in __call__

return self.func(*args)

File “”, line 11, in send

res = chatbot_response(msg)

File “”, line 43, in chatbot_response

res = getResponse(ints, data)

File “”, line 34, in getResponse

tag =ints[1][‘intent’]

IndexError: list index out of range

In [ ]:

The statement raises an error because you wrote tag = ints[1]['intent']. The “ints” variable holds the list returned by the “predict_class” function. The length of that list depends on the user’s input, so it is not guaranteed to contain more than one entry, although it normally contains at least one (it can be empty when no prediction clears the threshold). So write “ints[0]['intent']” instead of “ints[1]['intent']”.

i’m getting this error

Exception in Tkinter callback

Traceback (most recent call last):

File “C:\Users\SURYAPRAKASH\Anaconda3\lib\tkinter\__init__.py”, line 1705, in __call__

return self.func(*args)

File “”, line 11, in send

res = chatbot_response(msg)

File “”, line 38, in chatbot_response

res=getresponse(ints,data)

File “”, line 27, in getresponse

tag=ints[0][‘intent’]

IndexError: list index out of range

I am getting an error on base = TK()

I am using colab

base = Tk()

File “/usr/lib/python3.6/tkinter/__init__.py”, line 2023, in __init__

self.tk = _tkinter.create(screenName, baseName, className, interactive, wantobjects, useTk, sync, use)

_tkinter.TclError: couldn’t connect to display “:0.0”

Even I’m getting the same error.did you find the solution?

You can use Tkinter on your laptop or desktop not in Google Colab.


On Linux, I had to add:

nltk.download('punkt')

nltk.download('wordnet')

to execute the training file.

From the topic pre-processing data, i couldn’t understand which file we were going to deal with. If we just have to download the files and zip into a folder and run, where is the tutorial part? What is the input of the student other than editing the train data? I came with a lot of expectations, and since i couldn’t make the chatbot and couldn’t find any place where i could clarify doubts, thought at least such a feedback might help future learners.

The purpose of giving a zip file is to test the code set while you are trying to create one of yours. It is meant to be a guide; also, you have a detailed explanation of the code set with screenshots. The explanation helps you to build a chatbot by yourself.

The explanation is enough to get your idea clear on how to create a chatbot.

If we discuss the training process, in this section, we try to give a rough idea about how to get your model trained and from what kind of data. Next time, if any person tries to create their model, they will at least know how to start.

Hi, why is it always giving the response from the last intent of the JSON file?

Please provide the fix

there is an independent issue with the code if you copy paste it.

it should be

for doc in documents:
    # initialize our bag of words
    bag = []
    # list of tokenized words for the pattern
    pattern_words = doc[0]
    # lemmatize each word – create base word, in attempt to represent related words
    pattern_words = [lemmatizer.lemmatize(
        word.lower()) for word in pattern_words]
    # create our bag of words array with 1, if word match found in current pattern
    for w in words:
        bag.append(1) if w in pattern_words else bag.append(0)
    # output is a '0' for each tag and '1' for current tag (for each pattern)
    output_row = list(output_empty)
    output_row[classes.index(doc[1])] = 1
    training.append([bag, output_row])

Hi, why is it always giving the response from last intent of json file?

Please provide the fix

Guess you might be sending some kind of text which gets max probability match with the last intent.

If in case the issue persists, use a large volume of dataset and increase the error threshold.

I hope rather than copying the code from the article you are using the code from the download link

wow wonderful its working for me

can u please share the code

Hey mine has: NameError: name ‘msg’ is not defined

do you know how to fix it?

I faced the same error. I indented the "if msg != '':" line as follows, and it worked fine.

def send():
    msg = EntryBox.get("1.0", 'end-1c').strip()
    EntryBox.delete("0.0", END)
    if msg != '':
        # ...
        # ...

It was an indentation issue, we have updated code in the article, please refer.


Can you share which versions of tensorflow, nltk, keras and pickle you are using? And please mention your Python version as well.

We have used the following version:

Python = 3.8.5

Tensorflow = 2.4.1

NLTK = 3.5

Keras = 2.4.3

pickleshare = 0.7.5

I’ve fixed the package issue after which training model completed, but when I use the application it only gives response as “Please provide hospital name or location” for every query. Any reasons ?

Usually, Chatbots respond to the associated patterns and can’t go beyond the pattern.

It can be fixed either by training the model with a larger dataset or by increasing the error threshold, for example to 0.75, and then adding an if-else condition for the case where the result list is empty. Finally, use try-except so that for any user input that doesn’t match a pattern, the bot replies “I don’t understand”.
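A rough sketch of the threshold-plus-fallback idea, assuming the model output is a list of per-class probabilities. The class names, probabilities and helper names pick_intent and respond are made up for illustration, and an explicit check for "no confident match" is used here in place of try-except.

```python
FALLBACK = "I don't understand"


def pick_intent(probabilities, classes, threshold=0.75):
    """Return the most likely class, or None if nothing clears the threshold."""
    candidates = [(p, c) for p, c in zip(probabilities, classes) if p > threshold]
    if not candidates:
        return None
    return max(candidates)[1]


def respond(probabilities, classes, responses, threshold=0.75):
    """Return the response for a confident prediction, else the fallback text."""
    tag = pick_intent(probabilities, classes, threshold)
    if tag is None:
        return FALLBACK
    return responses[tag]


# Made-up classes, responses and model outputs, for illustration only.
toy_classes = ["greeting", "hospital_search"]
toy_responses = {
    "greeting": "Hello!",
    "hospital_search": "Please provide hospital name or location",
}
print(respond([0.9, 0.1], toy_classes, toy_responses))    # confident: greeting
print(respond([0.55, 0.45], toy_classes, toy_responses))  # not confident: fallback
```

With a higher threshold the bot answers less often but is less likely to return an unrelated response, so the value is worth tuning against real user messages.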

# create our training data

training = []

# create an empty array for our output

output_empty = [0] * len(classes)

# training set, bag of words for each sentence

for doc in documents:

# initialize our bag of words

bag = []

# list of tokenized words for the pattern

pattern_words = doc[0]

# lemmatize each word – create base word, in attempt to represent related words

pattern_words = [lemmatizer.lemmatize(word.lower()) for word in pattern_words]

# create our bag of words array with 1, if word match found in current pattern

for w in words:

bag.append(1) if w in pattern_words else bag.append(0)

# output is a ‘0’ for each tag and ‘1’ for current tag (for each pattern)

output_row = list(output_empty)

output_row[classes.index(doc[1])] = 1

training.append([bag, output_row])

# shuffle our features and turn into np.array

random.shuffle(training)

training = np.array(training)

# create train and test lists. X – patterns, Y – intents

train_x = list(training[:,0])

train_y = list(training[:,1])

print(“Training data created”)

There is an indentation issue in this particular part of the code. train_x variable always getting last bag value due to this issue. The author gave the complete code “Python Chatbot Project Dataset” at the top of this blog. You’ll face the issue if you copy and paste the code from here.

We have corrected the indentation issue, but it is still recommended to download the code from the “Download Chatbot Code & Dataset” section.

Can anyone please help with this error

Exception in Tkinter callback

Traceback (most recent call last):

ValueError: Error when checking input: expected dense_1_input to have shape (194,) but got array with shape (88,)

On modifying the intent file, it is now picking the last response only..

I hope rather than copying the code from the article you are using the code from the download link

You might be sending some kind of text which gets max probability match with the last intent.

If in case the issue persists, use a large volume of dataset and increase the error threshold.

Thank you for this! its running well. please help me understand how you created classes. id like to load a different data file and customize the bot

Classes are basically all those different tags mentioned for each message sent by the user. In order to make your training model, you have to create tags of different types which includes patterns and responses like the previous one, and finally train it.

Try training.append([bag]) not training.append(bag)

Hi,

I am getting an issue while training the chatbot. Following is the error line:

2020-05-29 12:00:03.762654: W tensorflow/stream_executor/platform/default/dso_loader.cc:55] Could not load dynamic library ‘cudart64_101.dll’; dlerror: cudart64_101.dll not found

2020-05-29 12:00:03.769838: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

Can anyone help with this?

Don’t worry, it’s not actually an error; it’s just a warning that TensorFlow gives when it cannot find the dependent library files (.dll) on the system.

The mentioned DLL file is for an NVIDIA GPU, i.e. an NVIDIA graphics card.

what is the compiler you are using?

I am not able to run the file in either python idle or the google collab I am getting some errors in the train_chatbot.py

I have already installed the tensor flow module in idle even though it is showing me that the module is not found.

Error in the IDLE:

Using TensorFlow backend.

Traceback (most recent call last):

File “C:\Users\admin\Documents\Kaushik\Projects\Chat Bot\train_chatbot.py”, line 8, in

from keras.models import Sequential

File “C:\Apps\Python\lib\site-packages\keras\__init__.py”, line 3, in

from . import utils

File “C:\Apps\Python\lib\site-packages\keras\utils\__init__.py”, line 6, in

from . import conv_utils

File “C:\Apps\Python\lib\site-packages\keras\utils\conv_utils.py”, line 9, in

from .. import backend as K

File “C:\Apps\Python\lib\site-packages\keras\backend\__init__.py”, line 1, in

from .load_backend import epsilon

File “C:\Apps\Python\lib\site-packages\keras\backend\load_backend.py”, line 90, in

from .tensorflow_backend import *

File “C:\Apps\Python\lib\site-packages\keras\backend\tensorflow_backend.py”, line 5, in

import tensorflow as tf

ModuleNotFoundError: No module named ‘tensorflow’

For google collab:

—————————————————————————

FileNotFoundError Traceback (most recent call last)

in ()

15 documents = []

16 ignore_words = [‘?’, ‘!’]

—> 17 data_file = open(‘intents.json’).read()

18 intents = json.loads(data_file)

19

FileNotFoundError: [Errno 2] No such file or directory: ‘intents.json’

Could you please help me with this, I am in very need of it.

Thanking you,

Hoping that you will reply me.

The very first thing is to check if the TensorFlow has been installed properly or not, check with conda list; also please use the following version of libraries: Python = 3.8.5, Tensorflow = 2.4.1, NLTK = 3.5, Keras = 2.4.3, pickleshare = 0.7.5

Talking about getting error no file found in colab:

For this issue, you need to add intent file in your google drive, after that, you have to request google drive to get access directly to colab. Once the authentication process is done, then you can access the files from drive to colab.

is it possible to combine this with a word embedding layer so that synonyms could be used to trigger intents?

Hi, such an amazing code for chat-bot. Can you please help me that how can I make delay in bot response. Actually I’ve tried a lot with time sleep.but it’s delaying sending the message of user and I want delay in bot’s reply. Could you please help ASAP.

Hi Sadhana did you find any solutions for this?

You can use the Tkinter after() method rather than time.sleep().
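A minimal sketch of that suggestion. The helper reply_later is hypothetical; it relies only on the after(delay_ms, callback) method that Tkinter widgets provide. The user's message is shown immediately and the bot's reply is scheduled for later, so the window stays responsive during the delay.

```python
def reply_later(widget, delay_ms, get_reply, show_reply):
    """Schedule the bot's reply without blocking the Tkinter event loop."""
    def deliver():
        show_reply(get_reply())
    # after() returns immediately; Tkinter calls deliver() once delay_ms has passed
    return widget.after(delay_ms, deliver)


def demo():
    """Small standalone window; call demo() on a machine with a display."""
    import tkinter as tk

    root = tk.Tk()
    log = tk.Text(root, height=8, width=40)
    log.pack()
    log.insert(tk.END, "You: hello\n")
    reply_later(root, 1500, lambda: "Hi there!",
                lambda text: log.insert(tk.END, "Bot: " + text + "\n"))
    root.mainloop()
```

In the article's send() function, this would mean inserting the "You: ..." line as before and moving the chatbot_response(msg) call and the "Bot: ..." insert into the scheduled callback, for example via base.after(...).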

where do i have to insert it? do i have to seperate input and answer for this? thx

How can we use this chatbot on messaging apps like whatsapp,facebook.

To use the Python chatbot project on Facebook or WhatsApp, you need the following three steps:

1. Create a server using Flask. This server will listen for messages from FB

2. Develop a function that sends messages back to users; this can be done with requests

3. Use ngrok to forward an https connection to the local machine