What is XGBoost Algorithm – Applied Machine Learning

1. XGBoost Algorithm – Objective

In this Machine Learning tutorial, we introduce XGBoost, walk through coding the XGBoost algorithm, and cover its advanced functionality along with its general parameters, booster parameters, linear booster specific parameters, and learning task parameters. We also look at building models with XGBoost and setting its parameters.

So, let’s start the XGBoost Algorithm Tutorial.

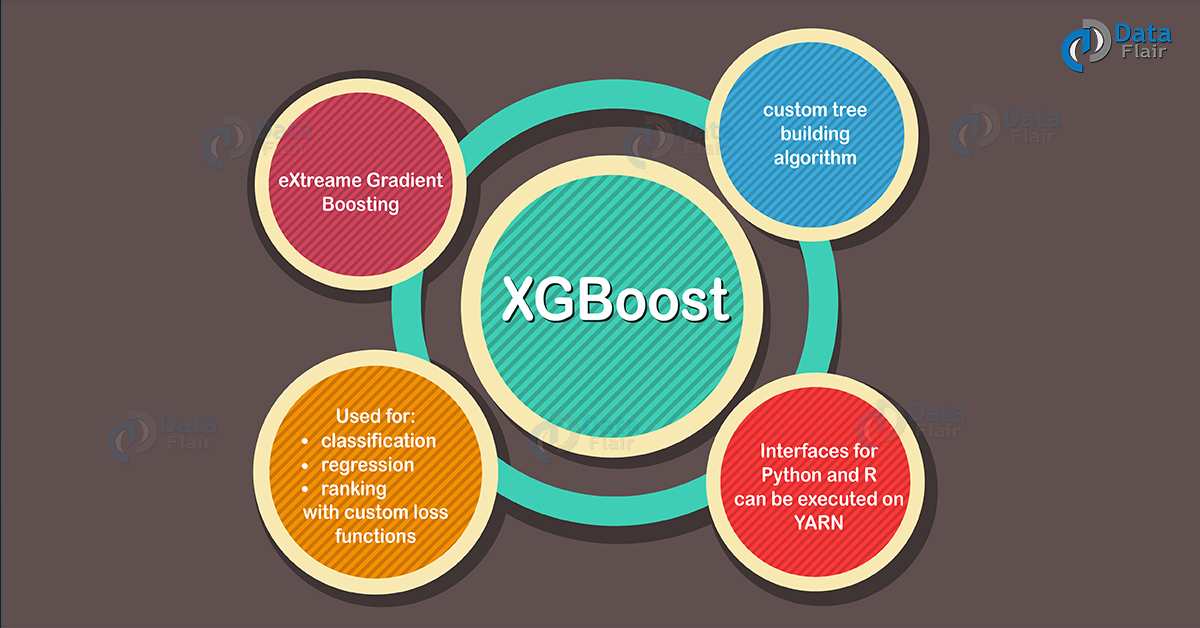

2. Introduction to XGBoost Algorithm

3. XGBoost Algorithm – Main Interfaces

- C++, Java and JVM languages.

- Julia.

- Command Line Interface.

- Python interface along with integrated model in scikit-learn.

- R interface as well as a model in the caret package.

4. Preparation of Data for using XGBoost Algorithm

5. Building Model – XGBoost Algorithm in R

Step 1: Load all the libraries

Step 2: Load the dataset

Step 3: Data Cleaning & Feature Engineering

Step 4: Tune and Run the model

6. XGBoost Algorithm – Parameters

a. General Parameters

The following general parameters are used in the XGBoost algorithm:

- silent: The default value is 0. Set it to 0 to print running messages or to 1 for silent mode.

- booster: The default value is gbtree. It selects the booster to use: gbtree (tree based) or gblinear (linear function).

- num_pbuffer: This is set automatically by XGBoost; the user does not need to set it. See the XGBoost documentation for more details.

- num_feature: This is set automatically by XGBoost; the user does not need to set it.

b. Booster Parameters

- eta: The default value is 0.3. This is the step size shrinkage used in each update to prevent overfitting. After each boosting step, we can directly get the weights of new features; eta shrinks these feature weights to make the boosting process more conservative. The range is 0 to 1. A low eta value makes the model more robust to overfitting.

- gamma: The default value is 0. This is the minimum loss reduction required to make a further partition on a leaf node of the tree. The larger gamma is, the more conservative the algorithm will be. The range is 0 to ∞.

- max_depth: The default value is 6. This is the maximum depth of a tree. The range is 1 to ∞.

- min_child_weight: The default value is 1. This is the minimum sum of instance weight (hessian) needed in a child. If the tree partition step results in a leaf node whose sum of instance weight is less than min_child_weight, the building process gives up further partitioning. In linear regression mode, this corresponds to the minimum number of instances needed in each node. The larger the value, the more conservative the algorithm will be. The range is 0 to ∞.

- max_delta_step: The default value is 0. This is the maximum delta step allowed for each tree’s weight estimation. A value of 0 means there is no constraint; a positive value can help make the update step more conservative. Usually this parameter is not needed, but it might help in logistic regression when a class is extremely imbalanced, where setting it to a value of 1 to 10 might help control the update. The range is 0 to ∞.

- subsample: The default value is 1. This is the subsample ratio of the training instances. Setting it to 0.5 means that XGBoost randomly samples half of the data instances to grow trees, which helps prevent overfitting. The range is 0 to 1.

- colsample_bytree: The default value is set to 1. You need to specify the subsample ratio of columns when constructing each tree. The range is 0 to 1.
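To make the roles of eta, gamma and min_child_weight more concrete, here is a minimal sketch in plain Python of the usual second-order split criterion used in gradient tree boosting. It is a simplified illustration, not XGBoost's actual implementation. The function names are hypothetical, and `reg_lambda` (an L2 term on leaf weights) is an assumption that is not listed among the booster parameters above.

```python
def leaf_weight(grad_sum, hess_sum, reg_lambda=1.0, eta=0.3):
    """Leaf weight -G / (H + lambda), shrunk by the step size eta."""
    return -eta * grad_sum / (hess_sum + reg_lambda)


def split_gain(g_left, h_left, g_right, h_right, reg_lambda=1.0, gamma=0.0):
    """Loss reduction of a candidate split, minus the gamma penalty."""
    def score(g, h):
        return g * g / (h + reg_lambda)

    parent = score(g_left + g_right, h_left + h_right)
    return 0.5 * (score(g_left, h_left) + score(g_right, h_right) - parent) - gamma


def accept_split(g_left, h_left, g_right, h_right,
                 reg_lambda=1.0, gamma=0.0, min_child_weight=1.0):
    """Reject a split if a child is too light or the gain is not positive."""
    # min_child_weight: each child needs at least this much hessian sum.
    if h_left < min_child_weight or h_right < min_child_weight:
        return False
    # gamma: the loss reduction must exceed gamma for the split to be kept.
    return split_gain(g_left, h_left, g_right, h_right, reg_lambda, gamma) > 0


# Toy gradient/hessian sums for the two children of a candidate split.
print(accept_split(-4.0, 2.0, 4.0, 2.0))                        # default settings
print(accept_split(-4.0, 2.0, 4.0, 2.0, gamma=6.0))             # larger gamma
print(accept_split(-4.0, 2.0, 4.0, 2.0, min_child_weight=3.0))  # heavier children required
print(leaf_weight(-4.0, 2.0, eta=0.3))
```

Raising gamma or min_child_weight turns the same candidate split from accepted to rejected, which is what "more conservative" means in the descriptions above. Lowering eta scales down each leaf's contribution.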

c. Linear Booster Specific Parameters

These are Linear Booster Specific Parameters in XGBoost Algorithm.

- lambda and alpha: These are regularization terms on the weights (lambda is the L2 term and alpha is the L1 term). The default value of lambda is assumed to be 1 and that of alpha is 0.

- lambda_bias: This is the L2 regularization term on the bias. The default value is 0.
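As a rough illustration of how these three terms act on a linear model, the sketch below computes a combined penalty for a weight vector and a bias. It assumes the conventional form with a factor of 1/2 on the L2 terms; the function name `linear_penalty` is hypothetical, and XGBoost's internal computation may differ in detail.

```python
def linear_penalty(weights, bias, lambda_=1.0, alpha=0.0, lambda_bias=0.0):
    """Regularization penalty for a linear booster (illustrative form).

    lambda_ is spelled with a trailing underscore because `lambda`
    is a reserved word in Python.
    """
    l2_weights = 0.5 * lambda_ * sum(w * w for w in weights)  # L2 on weights
    l1_weights = alpha * sum(abs(w) for w in weights)         # L1 on weights
    l2_bias = 0.5 * lambda_bias * bias * bias                 # L2 on bias
    return l2_weights + l1_weights + l2_bias


weights, bias = [1.0, -2.0], 0.5
print(linear_penalty(weights, bias))  # defaults as listed above
print(linear_penalty(weights, bias, lambda_=1.0, alpha=0.5, lambda_bias=2.0))
```

Larger values of any of the three parameters increase the penalty for the same weights, pushing the fitted model toward smaller coefficients.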

d. Learning Task Parameters

The following are the learning task parameters in the XGBoost algorithm:

- base_score: The default value is 0.5. This is the initial prediction score of all instances (the global bias).

- objective: The default value is reg:linear (renamed reg:squarederror in later XGBoost releases). It specifies the type of learner you want, for example linear regression or Poisson regression.

- eval_metric: This specifies the evaluation metrics for validation data. If it is not set, a default metric is assigned according to the objective.

- seed: This is the random seed. Set it to reproduce the same set of outputs.
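In practice, the parameter groups above are collected into a single key-value mapping. The sketch below builds such a mapping in plain Python from the defaults listed in this tutorial and checks the stated ranges. The names `DEFAULTS`, `RANGES` and `make_params` are hypothetical helpers for illustration and are not part of XGBoost.

```python
INF = float("inf")

# Defaults as listed in this tutorial; later XGBoost releases may differ
# (for example, reg:linear was later renamed reg:squarederror).
DEFAULTS = {
    "booster": "gbtree",
    "eta": 0.3,
    "gamma": 0,
    "max_depth": 6,
    "min_child_weight": 1,
    "max_delta_step": 0,
    "subsample": 1,
    "colsample_bytree": 1,
    "base_score": 0.5,
    "objective": "reg:linear",
}

# Ranges as stated in the parameter descriptions above.
RANGES = {
    "eta": (0, 1),
    "gamma": (0, INF),
    "max_depth": (1, INF),
    "min_child_weight": (0, INF),
    "max_delta_step": (0, INF),
    "subsample": (0, 1),
    "colsample_bytree": (0, 1),
}


def make_params(**overrides):
    """Merge overrides into the defaults and validate the documented ranges."""
    params = dict(DEFAULTS)
    params.update(overrides)
    if params["booster"] not in ("gbtree", "gblinear"):
        raise ValueError("booster must be 'gbtree' or 'gblinear'")
    for name, (low, high) in RANGES.items():
        if not low <= params[name] <= high:
            raise ValueError(f"{name}={params[name]} is outside [{low}, {high}]")
    return params


# A more conservative configuration than the defaults, with a fixed seed.
params = make_params(eta=0.1, max_depth=4, subsample=0.8, seed=42)
print(params)
```

With the xgboost Python package installed, a mapping like this is what gets passed as the parameter argument to `xgboost.train` together with the training data.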

7. Advanced functionality of XGBoost Algorithm

8. Conclusion
