Artificial Neural Networks for Machine Learning – Everything You Need to Know

Have you ever wondered how our brain works? Chances are you read about it in your school days. An Artificial Neural Network (ANN) is loosely modeled on the way neurons work in our nervous system. If you can’t recall it, no worries: this tutorial by DataFlair explains artificial neural networks with examples and real-world applications. So, let’s start the Artificial Neural Networks Tutorial.

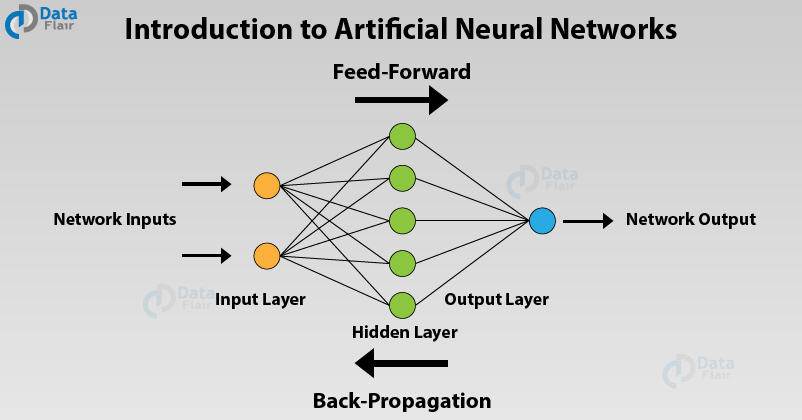

Introduction to Artificial Neural Networks

Artificial Neural Networks are among the most popular machine learning algorithms today. The first mathematical models of neural networks date back to the 1940s, but they have achieved huge popularity only recently, thanks to the increase in computation power, and are now used almost everywhere. In many of the applications that you use, Neural Networks power the intelligent features that keep you engaged.

Before moving on, I recommend that you revise your machine learning concepts.

What is ANN?

Artificial Neural Networks are a special type of machine learning algorithm modeled after the human brain. That is, just as the neurons in our nervous system are able to learn from past experience, an ANN is able to learn from data and provide responses in the form of predictions or classifications.

ANNs are nonlinear statistical models that capture complex relationships between inputs and outputs in order to discover new patterns. A variety of tasks, such as image recognition, speech recognition, machine translation and medical diagnosis, make use of these artificial neural networks.

An important advantage of ANN is that it learns from example data sets. The most common use of ANN is as an approximator of arbitrary functions. With these tools, one has a cost-effective method of arriving at solutions that define the distribution. ANN is also capable of working from a sample of the data rather than the entire dataset to provide the output. With ANNs, one can enhance existing data analysis techniques owing to their advanced predictive capabilities.

Don’t forget to check DataFlair’s leading blog on Recurrent Neural Networks.

Artificial Neural Networks Architecture

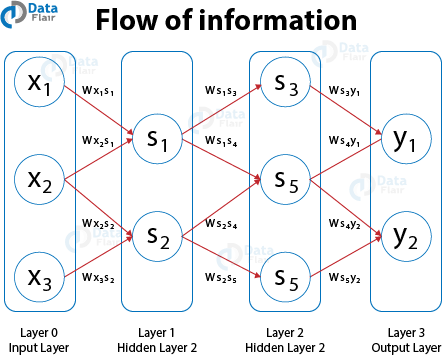

The functioning of Artificial Neural Networks is similar to the way neurons work in our nervous system. Neural Networks go back to 1943, when Warren S. McCulloch and Walter Pitts proposed the first mathematical model of a neuron. In order to understand the workings of ANNs, let us first understand how they are structured. In a neural network, there are three essential layers –

Input Layer

The input layer is the first layer of an ANN. It receives the input information in the form of text, numbers, audio files, image pixels, etc.

Hidden Layers

In the middle of the ANN model are the hidden layers. There can be a single hidden layer, as in the simplest multilayer perceptron, or multiple hidden layers. These hidden layers perform various types of mathematical computation on the input data and recognize the patterns present in it.

Output Layer

In the output layer, we obtain the result of the computations performed by the hidden layers.

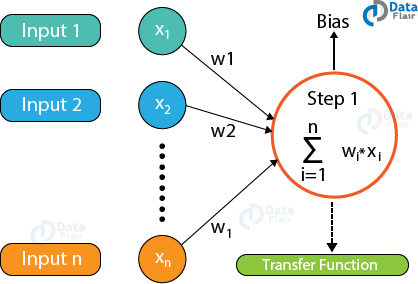

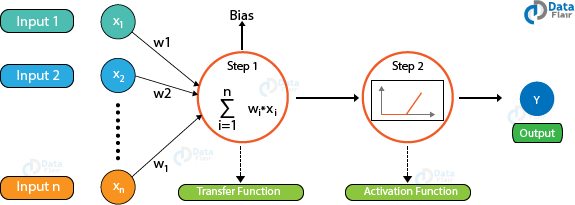

In a neural network, there are multiple parameters and hyperparameters that affect the performance of the model. The output of ANNs mostly depends on them. Weights and biases are parameters that the network learns during training, while the learning rate, batch size etc. are hyperparameters that we set before training.

Each connection into a node has a weight assigned to it, and each node has a bias. A transfer function calculates the weighted sum of the inputs and adds the bias.

After the transfer function has calculated the sum, the activation function takes this result and decides what the node outputs. For example, with a simple threshold activation, if the value received is above 0.5, the node fires a 1; otherwise it outputs 0.
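The weighted sum and the 0.5 threshold described above can be sketched in a few lines of Python. This is only a minimal illustration: the function names, the weights and the bias are made up for the example and do not come from any particular library.

```python
def transfer(inputs, weights, bias):
    """Transfer function: weighted sum of the inputs plus the bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias


def step_activation(value, threshold=0.5):
    """Fire 1 when the value is above the threshold, otherwise 0."""
    return 1 if value > threshold else 0


def neuron(inputs, weights, bias):
    """A single node: transfer function followed by the activation."""
    return step_activation(transfer(inputs, weights, bias))


# Hypothetical weights and bias, chosen only for illustration.
weights = [0.4, 0.3]
bias = 0.1

print(neuron([1.0, 1.0], weights, bias))  # weighted sum is about 0.8, above 0.5
print(neuron([0.0, 1.0], weights, bias))  # weighted sum is about 0.4, not above 0.5
```

In a real network the weights and the bias are not picked by hand like this; they are learned during training.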

Some of the popular activation functions used in Artificial Neural Networks are Sigmoid, ReLU, Softmax, tanh etc.
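To make these names concrete, here is a small sketch of the four activation functions using only Python's standard library. The definitions follow the usual textbook formulas; deep learning frameworks ship their own optimized versions.

```python
import math


def sigmoid(x):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    """Passes positive values through and replaces negatives with 0."""
    return max(0.0, x)


def tanh(x):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(x)


def softmax(values):
    """Turns a list of scores into probabilities that sum to 1."""
    largest = max(values)  # subtracting the max keeps exp() from overflowing
    exps = [math.exp(v - largest) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


print(sigmoid(0.0))
print(relu(-2.0), relu(3.0))
print(tanh(0.0))
print(softmax([1.0, 2.0, 3.0]))
```

Sigmoid, ReLU and tanh act on one value at a time, whereas softmax acts on a whole layer of outputs at once, which is why it is typically used in the output layer of a classifier.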

Based on the values that the nodes have fired, we obtain the final output. Then, using an error function, we calculate the discrepancy between the predicted output and the expected output, and adjust the weights of the neural network through a process known as backpropagation.

ANNs with many hidden layers form the basis of an area of Machine Learning known as Deep Learning.

Many people confuse Deep Learning with Machine Learning. Are you one of them? Check this easy-to-understand article on Deep Learning vs Machine Learning.

Back Propagation in Artificial Neural Networks

In order to train a neural network, we provide it with examples of input-output mappings. For each example, the neural network predicts an output, and we evaluate how far the prediction is from the expected output using an error function. Based on this error, the model adjusts the weights of the neural network: backpropagation applies the chain rule to compute the gradient of the error with respect to each weight, and gradient descent moves the weights in the direction that reduces the error. Finally, when training is complete, we test the neural network on inputs for which we do not provide the expected outputs.
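As a rough sketch of this training loop, the example below trains a single sigmoid neuron with gradient descent on a toy dataset (the logical AND of two inputs). It is a deliberate simplification: with only one neuron there are no hidden layers to propagate through, but the weight update uses the same chain rule that backpropagation applies layer by layer in a full network. The dataset, learning rate and number of epochs are assumptions chosen for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(x, weights, bias):
    """Forward pass: weighted sum plus bias, then sigmoid activation."""
    return sigmoid(sum(xi * wi for xi, wi in zip(x, weights)) + bias)


def mean_squared_error(data, weights, bias):
    """Error function: average squared gap between prediction and target."""
    return sum((predict(x, weights, bias) - y) ** 2 for x, y in data) / len(data)


def train(data, epochs=5000, learning_rate=0.5):
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for x, y in data:
            y_hat = predict(x, weights, bias)
            # Chain rule for E = (y_hat - y)^2 / 2 with y_hat = sigmoid(z):
            # dE/dz = (y_hat - y) * y_hat * (1 - y_hat)
            delta = (y_hat - y) * y_hat * (1.0 - y_hat)
            # Gradient descent: step against the gradient dE/dw = delta * x.
            weights = [w - learning_rate * delta * xi for w, xi in zip(weights, x)]
            bias -= learning_rate * delta
    return weights, bias


# Toy input-output mappings: the output is 1 only when both inputs are 1.
data = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

error_before = mean_squared_error(data, [0.0, 0.0], 0.0)
weights, bias = train(data)
error_after = mean_squared_error(data, weights, bias)

print("error before training:", error_before)
print("error after training:", error_after)
print("rounded predictions:", [round(predict(x, weights, bias)) for x, _ in data])
```

The error after training should be much smaller than the error before it, which is the whole point of adjusting the weights along the gradient.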

Types of Artificial Neural Networks

There are two important types of Artificial Neural Networks –

- FeedForward Neural Network

- FeedBack Neural Network

FeedForward Artificial Neural Networks

In feedforward ANNs, information flows in only one direction: from the input layer to the hidden layers and finally to the output layer. There are no feedback loops in this neural network. These types of neural networks are mostly used in supervised learning, for tasks such as classification, image recognition etc. We use them in cases where the data is not sequential in nature.
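The one-directional flow can be illustrated with a tiny network whose layers are applied one after another. The sketch below uses hand-picked, hypothetical weights so that a network with two inputs, two hidden nodes and one output node computes XOR; a real network would learn its weights rather than have them written by hand.

```python
def step(value):
    """Threshold activation: fire 1 for positive values, otherwise 0."""
    return 1 if value > 0 else 0


def layer(inputs, weight_rows, biases):
    """Compute the output of every node in one layer."""
    return [
        step(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weight_rows, biases)
    ]


def feedforward(inputs, layers):
    """Pass the data through each layer in order; nothing flows backwards."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations


# Hypothetical weights: hidden node 1 behaves like OR, hidden node 2 like AND,
# and the output node fires when OR is on but AND is off (that is, XOR).
network = [
    ([[1.0, 1.0], [1.0, 1.0]], [-0.5, -1.5]),  # hidden layer
    ([[1.0, -1.0]], [-0.5]),                   # output layer
]

for inputs in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(inputs, feedforward(list(inputs), network))
```

Each call depends only on the current input, so the order in which the examples are presented makes no difference to the result.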

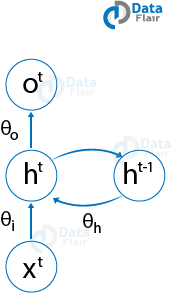

Feedback Artificial Neural Networks

Feedback ANNs contain feedback loops. Such neural networks are mainly used where memory retention is needed, as in the case of recurrent neural networks. These types of networks are best suited to areas where the data is sequential or time-dependent.
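A feedback loop can be sketched with a single recurrent node whose previous output is fed back in as an extra input at the next time step. This is a minimal illustration, not a full recurrent neural network; the weights and the input sequences are hypothetical.

```python
import math


def recurrent_step(x, hidden, w_input, w_hidden, bias):
    """New state depends on the current input AND the previous state."""
    return math.tanh(w_input * x + w_hidden * hidden + bias)


def run_sequence(sequence, w_input=0.5, w_hidden=0.8, bias=0.0):
    hidden = 0.0  # the "memory" starts empty
    states = []
    for x in sequence:
        hidden = recurrent_step(x, hidden, w_input, w_hidden, bias)
        states.append(hidden)
    return states


# Both sequences end with the same input (1.0) but have different histories.
states_a = run_sequence([1.0, 0.0, 1.0])
states_b = run_sequence([0.0, 0.0, 1.0])

print(states_a[-1])
print(states_b[-1])
```

The two final states differ even though the last input is identical, because the first sequence left a trace in the hidden state. That retained trace is what makes such networks suitable for sequential data.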

Bayesian Networks

Unlike the networks above, Bayesian Networks are probabilistic graphical models that make use of Bayesian inference for computing probabilities. They are also known as Belief Networks. In a Bayesian Network, the nodes represent random variables and the edges that connect them represent the probabilistic dependencies among these variables. The direction of an edge shows the direction of effect: if one node affects another, an edge points from the first to the second. The probability associated with each node quantifies the strength of the relationship. Based on these relationships, one can make inferences about the random variables in the graph.

The only constraint that these networks have to follow is that one cannot return to a node by following the directed arcs. Therefore, Bayesian Networks are Directed Acyclic Graphs (DAGs).
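The acyclicity constraint can be checked mechanically. The sketch below uses Kahn's topological-sort algorithm, one common way to test whether a directed graph is a DAG; the node names are hypothetical and serve only as an example.

```python
def is_acyclic(edges):
    """Return True if the directed graph given as (parent, child) pairs has no cycle."""
    nodes = {node for edge in edges for node in edge}
    incoming = {node: 0 for node in nodes}
    children = {node: [] for node in nodes}
    for parent, child in edges:
        children[parent].append(child)
        incoming[child] += 1

    # Repeatedly remove nodes that have no remaining incoming arcs.
    ready = [node for node in nodes if incoming[node] == 0]
    removed = 0
    while ready:
        node = ready.pop()
        removed += 1
        for child in children[node]:
            incoming[child] -= 1
            if incoming[child] == 0:
                ready.append(child)

    # If a cycle exists, its nodes can never be removed.
    return removed == len(nodes)


valid_network = [("Rain", "WetGrass"), ("Sprinkler", "WetGrass")]
invalid_network = [("A", "B"), ("B", "C"), ("C", "A")]

print(is_acyclic(valid_network))
print(is_acyclic(invalid_network))
```

Only the first graph qualifies as the structure of a Bayesian Network; in the second one, following the arcs from A leads back to A.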

These Bayesian Networks can handle multivalued variables, and they comprise two dimensions –

- Range of propositions

- Probability assigned to each proposition.

Assume that there is a finite set of random variables X = {X1, X2… Xn}, where each variable Xi takes its values from a finite set denoted by Value{Xi}. If there is a directed link from the variable Xi to the variable Xj, then Xi is the parent of Xj, which shows the direct dependency between these variables.

With the help of Bayesian Networks, one can combine prior knowledge with observed data. Bayesian Networks are mainly used for learning causal relationships and for understanding a problem domain in order to predict future events. This works even in the case of missing data.
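Here is a small sketch of how prior knowledge and observed data combine in a Bayesian Network. It assumes a hypothetical three-node network in which Rain and Sprinkler are both parents of WetGrass; all the probabilities are invented for illustration. The joint probability is the product of each node's probability given its parents, and the posterior is obtained by summing over the variable we did not observe.

```python
# Prior probabilities of the parent nodes (hypothetical numbers).
p_rain = {True: 0.2, False: 0.8}
p_sprinkler = {True: 0.1, False: 0.9}

# P(WetGrass = True | Rain, Sprinkler), also hypothetical.
p_wet_given = {
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def joint(rain, sprinkler, wet):
    """Joint probability: product of each node's probability given its parents."""
    p_wet = p_wet_given[(rain, sprinkler)]
    return p_rain[rain] * p_sprinkler[sprinkler] * (p_wet if wet else 1.0 - p_wet)


def posterior_rain_given_wet():
    """P(Rain = True | WetGrass = True), summing out the unobserved Sprinkler."""
    numerator = sum(joint(True, s, True) for s in (True, False))
    evidence = sum(
        joint(r, s, True) for r in (True, False) for s in (True, False)
    )
    return numerator / evidence


print("prior P(Rain):", p_rain[True])
print("posterior P(Rain | WetGrass):", posterior_rain_given_wet())
```

Observing wet grass raises the probability of rain above its prior value. Note that the Sprinkler variable was never observed: it is simply summed out, which is how inference still works when some data is missing.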

Artificial Neural Networks Applications

Following are the important Artificial Neural Networks applications –

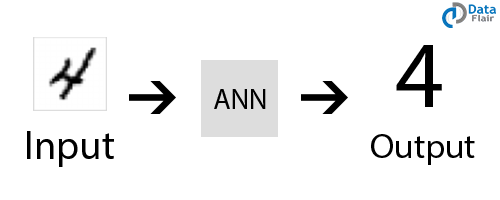

Handwritten Character Recognition

ANNs are used for handwritten character recognition. Neural Networks are trained to recognize the handwritten characters which can be in the form of letters or digits.

Speech Recognition

ANNs play an important role in speech recognition. The earlier models of speech recognition were based on statistical models like Hidden Markov Models. With the advent of deep learning, various types of neural networks have become the preferred choice for obtaining accurate results.

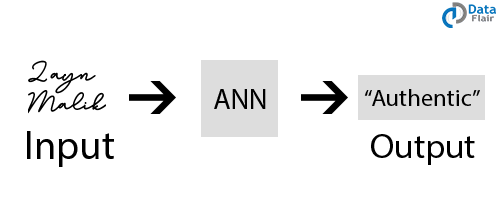

Signature Classification

For recognizing signatures and assigning them to the right person’s class, we use artificial neural networks to build authentication systems. Furthermore, neural networks can also classify whether a signature is fake or not.

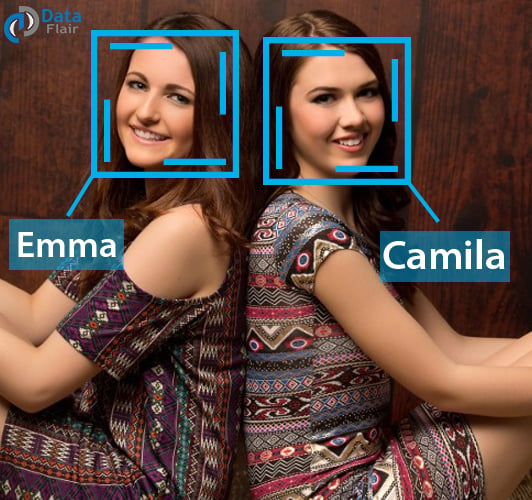

Facial Recognition

In order to recognize faces based on the identity of the person, we make use of neural networks. They are most commonly used in areas where users require security access. Convolutional Neural Networks are the most popular type of ANN used in this field.

Summary

So, you saw the use of artificial neural networks through different applications. We hope this DataFlair tutorial gave you a clear introduction to artificial neural networks. We also added several examples of ANN throughout the blog so that you can relate to the concept of neural networks easily. We studied how neural networks learn to predict accurately using the process of backpropagation. We also went through Bayesian Networks and, finally, we looked at the various applications of ANNs.

Did you enjoy reading this article? Please share your feedback through the comments.
