What are Recurrent Neural Networks? An Ultimate Guide for Newbies!


Apple’s Siri and Amazon’s Alexa have one thing in common apart from being personal assistants: both have used Recurrent Neural Networks to understand human speech and generate replies. Beyond voice assistants, RNNs are widely used across the industry. To explain RNNs in simple terms, DataFlair brings this article on Recurrent Neural Networks, prepared after discussions with data scientists and machine learning experts.

Here, we will discuss one of the most important types of machine learning models: the Recurrent Neural Network (RNN). RNNs are especially helpful for tasks such as translating text from one language into another. We will cover the background, working, and various applications of RNNs. So, let’s start the tutorial with a basic introduction to Recurrent Neural Networks.

What are Recurrent Neural Networks?

A Recurrent Neural Network is a type of neural network in which the connections between nodes form a directed graph along a temporal sequence. By temporal, we mean data that changes over time.

RNN examples include time-series data such as stock prices that change over time, sensor readings, medical records, etc. Recurrent neural networks use their internal state, or memory, to process a sequence of inputs in which each input depends on the inputs that came before it. Because of this connection between the elements of a sequence, we use RNNs in areas like natural language processing and speech recognition.

Why RNN?

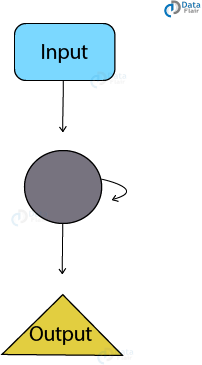

Traditional feedforward neural networks treat each input independently, so they cannot use past inputs to inform how they handle future ones. For example, a traditional neural network cannot predict the next word in a sentence from the words that came before it. A recurrent neural network (RNN), however, can. As the name suggests, recurrent neural networks are recurring: they execute in loops, which allows information to persist from one step to the next.

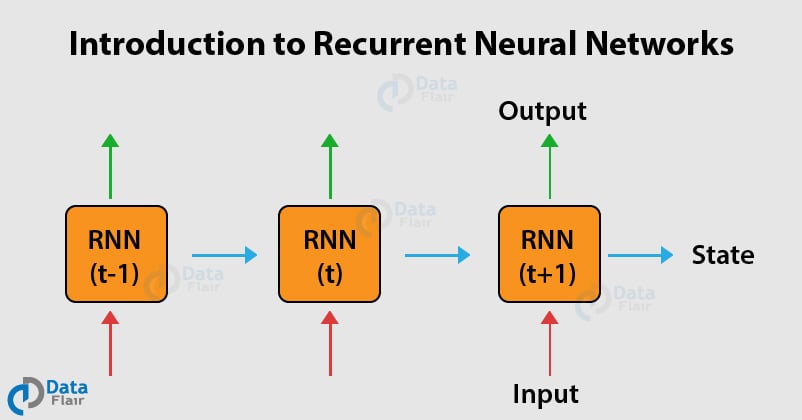

In the above diagram, a neural network takes the input $x_t$ and gives us the output $h_t$. A loop passes this information from one step to the successive step. When unfolded, a recurrent neural network can be viewed as multiple copies of the same network, each passing information to the next state.

RNNs allow us to perform modeling over a sequence or chain of vectors. The sequence can be in the input, in the output, or in both. Recurrent neural networks are therefore naturally suited to lists and sequences, which makes them a strong candidate whenever your data is sequential in nature.
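To make the idea of unfolding concrete, here is a minimal Python sketch, assuming a scalar RNN with one hidden unit and arbitrarily chosen weights (`w_hh = 0.5`, `w_xh = 1.0`). The function names `step`, `many_to_many`, `many_to_one`, and `one_to_many` are hypothetical, chosen only for illustration. The same `step` (one copy of the network) is reused at every time step, and the three wrappers show the sequence appearing in the input, in the output, or in both.

```python
import math

def step(h_prev, x, w_hh=0.5, w_xh=1.0):
    """One copy of a scalar recurrent network: new state from previous state and input."""
    return math.tanh(w_hh * h_prev + w_xh * x)

def many_to_many(xs, h0=0.0):
    """Sequence in, sequence out: unroll over every input and keep every state."""
    states, h = [], h0
    for x in xs:
        h = step(h, x)  # identical weights at every time step
        states.append(h)
    return states

def many_to_one(xs, h0=0.0):
    """Sequence in, single value out: keep only the final state."""
    return many_to_many(xs, h0)[-1]

def one_to_many(x0, n, h0=0.0):
    """Single value in, sequence out: feed each new state back in as the next input."""
    outputs, h, x = [], h0, x0
    for _ in range(n):
        h = step(h, x)
        outputs.append(h)
        x = h  # the previous output becomes the next input
    return outputs

print(many_to_many([1.0, 0.5]))  # two states, one per input
print(many_to_one([1.0, 0.5]))   # only the last state
print(one_to_many(1.0, 3))       # three generated values
```

Sentiment analysis is a typical many-to-one task (a whole sentence in, one label out), while machine translation maps a sequence to a sequence.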


How do Recurrent Neural Networks Work?

In a traditional neural network, each hidden layer has its own set of weights and biases. Suppose there is a deeper network with one input layer, three hidden layers, and one output layer. Let us call the weight and bias of the first hidden layer w1 and b1. Similarly, we have w2, b2 for the second hidden layer and w3, b3 for the third. These layers are independent of one another, meaning that they do not memorize previous outputs.

A recurrent neural network does the following:

- It converts the independent activations into dependent ones. It assigns the same weights and bias to all the layers, which reduces the number of parameters the RNN needs, and it memorizes previous outputs by providing each layer's output as an input to the next layer.

- Because these three layers share the same weights and bias, they can be combined into a single recurrent unit.
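The saving from weight sharing is easy to count. The sketch below assumes a hidden layer built from an input matrix $W_{xh}$, a recurrent matrix $W_{hh}$, and a bias vector; `rnn_param_count` is a hypothetical helper, and the unshared case describes a hypothetical network that gives every time step its own weights.

```python
def rnn_param_count(input_size, hidden_size, steps, shared=True):
    """Count hidden-layer weights and biases for a sequence of `steps` time steps.

    Each step uses an input matrix W_xh (hidden_size x input_size),
    a recurrent matrix W_hh (hidden_size x hidden_size) and a bias (hidden_size).
    """
    per_step = hidden_size * input_size + hidden_size * hidden_size + hidden_size
    # A recurrent unit reuses one set for every step; without sharing,
    # each step would need its own copy.
    return per_step if shared else per_step * steps

print(rnn_param_count(3, 4, 10))                # 32 (shared recurrent unit)
print(rnn_param_count(3, 4, 10, shared=False))  # 320 (separate weights per step)
```

With 3 inputs, 4 hidden units, and 10 time steps, sharing needs 32 parameters instead of 320, and the shared count stays the same however long the sequence gets.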

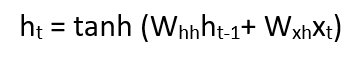

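The current state is calculated from the previous state and the current input:

$$h_t = f(h_{t-1}, x_t)$$

where:

- $h_t$ is the current state,

- $h_{t-1}$ is the previous state, and

- $x_t$ is the input at the current time step.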

Applying the activation function tanh, we have:

$$h_t = \tanh(W_{hh} h_{t-1} + W_{xh} x_t)$$

where:

- $W_{hh}$ is the weight of the recurrent neuron, and

- $W_{xh}$ is the weight of the input neuron.
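As a quick worked example, consider the scalar case (one input and one hidden unit) with illustrative, arbitrarily chosen values $W_{hh} = 0.5$, $W_{xh} = 1$, initial state $h_0 = 0$, and inputs $x_1 = 1$, $x_2 = 0.5$:

```latex
\begin{aligned}
h_1 &= \tanh(0.5 \cdot 0 + 1 \cdot 1) = \tanh(1) \approx 0.762 \\
h_2 &= \tanh(0.5 \cdot 0.762 + 1 \cdot 0.5) = \tanh(0.881) \approx 0.707
\end{aligned}
```

The second state depends on both the new input $x_2$ and the first state $h_1$, which is the memory effect the formula is meant to capture.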


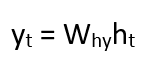

The formula for calculating the output is:

$$y_t = W_{hy} h_t$$

where:

- $y_t$ is the output, and

- $W_{hy}$ is the weight at the output layer.
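Putting the state and output formulas together, a forward pass over a whole sequence can be sketched in plain Python, assuming matrices are stored as lists of rows and vectors as lists. No bias term is used, matching the formulas in this section; `matvec` and `rnn_forward` are hypothetical helper names, and the weights in the usage lines are arbitrary illustrative values.

```python
import math

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def rnn_forward(xs, W_xh, W_hh, W_hy, h0):
    """Apply h_t = tanh(W_hh h_{t-1} + W_xh x_t) and y_t = W_hy h_t at every step."""
    h, ys = h0, []
    for x in xs:
        pre = [a + b for a, b in zip(matvec(W_hh, h), matvec(W_xh, x))]
        h = [math.tanh(p) for p in pre]  # current state; becomes h_{t-1} next step
        ys.append(matvec(W_hy, h))       # output for this time step
    return ys, h

# Hypothetical sizes: 2 inputs, 3 hidden units, 1 output, 2 time steps.
W_xh = [[0.1, -0.2], [0.4, 0.3], [-0.5, 0.2]]
W_hh = [[0.5, 0.0, 0.1], [0.0, 0.5, -0.1], [0.2, 0.0, 0.5]]
W_hy = [[1.0, -1.0, 0.5]]
ys, h_last = rnn_forward([[1.0, 0.0], [0.5, -1.0]], W_xh, W_hh, W_hy, [0.0, 0.0, 0.0])
print(ys)      # one output vector per time step
print(h_last)  # final hidden state
```

The same three weight matrices are used at both time steps; only the state `h` changes as the loop advances.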

Training an RNN

- The network takes the input one time step at a time.

- We calculate the current state from the current input and the previous state.

- The current state $h_t$ then becomes $h_{t-1}$ for the next time step.

- There can be any number n of time steps; the state carries information from all of the previous steps forward to the end of the sequence.

- Once all the steps are complete, the final state is used to calculate the output.

- We then compute the error as the difference between the actual output and the predicted output.

- Finally, the error is backpropagated through the network, across all the time steps (backpropagation through time), to adjust the weights and produce a better outcome.
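The training steps above can be sketched for the simplest case: a scalar RNN (one input, one hidden unit, one output) whose output is computed only after the final step, trained with squared error and plain gradient descent. These choices, the starting weights, the learning rate, and the function names `forward`, `bptt`, and `train` are all assumptions for illustration; deep learning frameworks compute these gradients automatically. The backward loop walks the time steps in reverse, which is what backpropagation through time means.

```python
import math

def forward(xs, w_xh, w_hh, w_hy, h0=0.0):
    """Run the scalar RNN over xs; return all states and the final output."""
    hs = [h0]  # hs[t] is the state after t inputs; hs[0] is the initial state
    for x in xs:
        hs.append(math.tanh(w_hh * hs[-1] + w_xh * x))
    y = w_hy * hs[-1]  # output computed once, after the last step
    return hs, y

def bptt(xs, target, w_xh, w_hh, w_hy):
    """Return the squared-error loss and its gradient for each weight."""
    hs, y = forward(xs, w_xh, w_hh, w_hy)
    err = y - target  # predicted output minus actual output
    loss = 0.5 * err ** 2
    g_hy = err * hs[-1]
    g_xh = g_hh = 0.0
    dh = err * w_hy  # dLoss/dh_T
    for t in range(len(xs), 0, -1):  # walk backwards through time
        da = dh * (1.0 - hs[t] ** 2)  # backprop through tanh
        g_xh += da * xs[t - 1]
        g_hh += da * hs[t - 1]
        dh = da * w_hh  # pass the error back to the previous state
    return loss, g_xh, g_hh, g_hy

def train(xs, target, w_xh=0.5, w_hh=0.5, w_hy=0.5, lr=0.1, epochs=100):
    """Plain gradient descent; returns the final weights and the loss history."""
    losses = []
    for _ in range(epochs):
        loss, g_xh, g_hh, g_hy = bptt(xs, target, w_xh, w_hh, w_hy)
        losses.append(loss)
        # Move each weight against its gradient to reduce the error.
        w_xh -= lr * g_xh
        w_hh -= lr * g_hh
        w_hy -= lr * g_hy
    return (w_xh, w_hh, w_hy), losses

xs, target = [1.0, 0.5, -0.5], 0.8
weights, losses = train(xs, target)
print(losses[0], losses[-1])  # loss before and after training
```

Note that `w_hh` receives a gradient contribution from every time step, because the same recurrent weight is shared across the whole unrolled sequence.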

Applications of Recurrent Neural Networks

This is the most exciting part of our Recurrent Neural Networks tutorial. Below are some notable applications of RNNs:

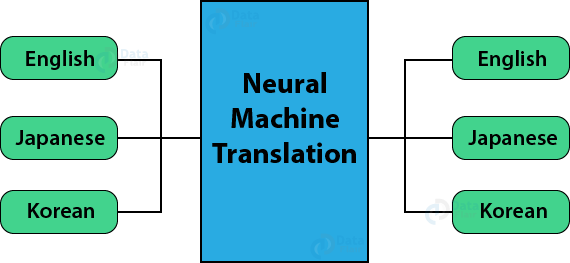

1. Machine Translation

Translation engines use Recurrent Neural Networks to translate text from one language to another. In practice, they often rely on a gated RNN variant called the LSTM (Long Short-Term Memory) network.

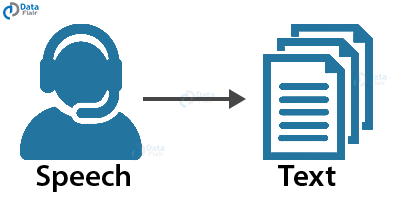

2. Speech Recognition

Recurrent Neural Networks have largely replaced the traditional speech recognition models that relied on Hidden Markov Models. RNNs, particularly LSTMs, are better at classifying speech and converting it into text without losing context.

3. Automatic Image Tagger

In conjunction with Convolutional Neural Networks (CNNs), RNNs can recognize the content of images and describe it in the form of tags or captions. For example, an RNN can describe an image of a fox jumping over a fence as a sequence of words, which conveys the scene more appropriately than isolated labels.

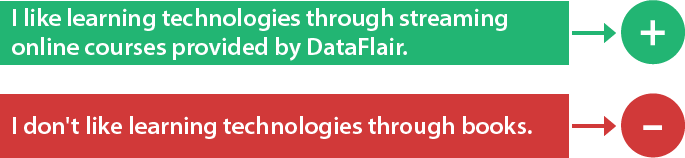

4. Sentiment Analysis

Sentiment analysis is used to understand a user's sentiment by determining whether a sentence is positive, negative, or neutral. Because the meaning of a sentence depends on the order of its words, RNNs are well suited to handling this sequential data and finding the sentiment of a sentence.


Summary

In this Recurrent Neural Networks tutorial, we saw how RNNs process sequential data and where they are applied. We also studied how RNNs can be combined with other models to process data more effectively. We hope you now understand the core concepts of Recurrent Neural Networks. What next? Here is DataFlair’s next article on Top ANN Applications.

Your feedback is appreciated; please share it in the comments.
