Features of Apache Spark – Learn the benefits of using Spark

1. Objective

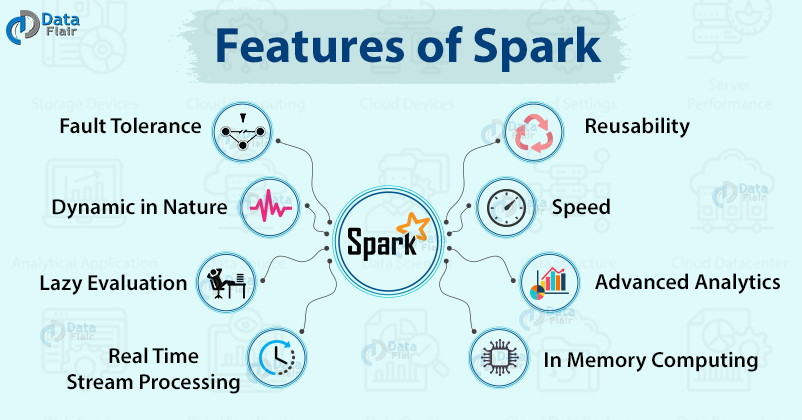

Apache Spark, an open-source framework for big data, has various advantages over other big data solutions. It is dynamic in nature and supports in-memory computation of RDDs. It also provides reusability, fault tolerance, real-time stream processing and more. In this tutorial on the features of Apache Spark, we discuss the advantages that answer these questions: Why should we learn Apache Spark? Why is Spark better than Hadoop MapReduce? And why is Spark called the 3G of big data?

2. Introduction to Apache Spark

Apache Spark is a lightning-fast, in-memory data processing engine. Spark is designed mainly for data science, and its abstractions make data science easier. Apache Spark provides high-level APIs in Java, Scala, Python and R, and it has an optimized engine that supports general execution graphs. In data processing, Apache Spark is the largest open-source project. Follow this guide to learn how Apache Spark works in detail.

3. Features of Apache Spark

Let’s discuss the sparkling features of Apache Spark:

a. Swift Processing

Using Apache Spark, we can achieve high data processing speed: about 100x faster than Hadoop MapReduce in memory and 10x faster on disk. This is possible because Spark reduces the number of read/write operations to disk.

b. Dynamic in Nature

We can easily develop parallel applications, because Spark provides over 80 high-level operators.
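To see how chaining high-level operators keeps a parallel job short, here is a toy word count in plain Python. The helpers `flat_map`, `map_` and `reduce_by_key` are hypothetical single-machine stand-ins named after Spark's RDD operators `flatMap`, `map` and `reduceByKey`; they are not the Spark API and run nothing in parallel.

```python
from collections import defaultdict
from functools import reduce

# Toy, single-machine stand-ins for three of Spark's RDD operators.
# These are plain Python functions, NOT the Spark API.
def flat_map(f, records):
    # Apply f to every record and flatten the resulting lists.
    return [out for rec in records for out in f(rec)]

def map_(f, records):
    # Apply f to every record (named map_ to avoid shadowing the builtin).
    return [f(rec) for rec in records]

def reduce_by_key(f, pairs):
    # Group values by key, then combine each group with f.
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return {key: reduce(f, values) for key, values in grouped.items()}

lines = ["spark is fast", "spark is general"]
words = flat_map(str.split, lines)
pairs = map_(lambda w: (w, 1), words)
counts = reduce_by_key(lambda a, b: a + b, pairs)
print(counts)  # {'spark': 2, 'is': 2, 'fast': 1, 'general': 1}
```

In Spark, the same three steps would be chained on an RDD, and the framework would distribute each step across the cluster.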

c. In-Memory Computation in Spark

In-memory processing increases processing speed. Because the data is cached in memory, we do not need to fetch it from disk every time, which saves time. Spark has a DAG (directed acyclic graph) execution engine that facilitates in-memory computation and acyclic data flow, resulting in high speed.
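The benefit of caching can be illustrated with a toy model in plain Python. The disk read is simulated by a function that counts how often it is called, and `CachedDataset` is a hypothetical class that keeps the data in memory after the first load. This is not Spark's `cache()`/`persist()` implementation; it only shows why a second job over cached data needs no extra disk read.

```python
# Toy model: a "disk" read that we count, and an in-memory cache that
# avoids repeating it. An illustration, not Spark's cache()/persist().
disk_reads = 0

def read_from_disk():
    """Simulate an expensive read of a dataset from disk."""
    global disk_reads
    disk_reads += 1
    return list(range(1, 1001))

class CachedDataset:
    def __init__(self, loader):
        self._loader = loader
        self._data = None  # filled on first use, then kept in memory

    def data(self):
        if self._data is None:
            self._data = self._loader()
        return self._data

ds = CachedDataset(read_from_disk)
total = sum(ds.data())                            # first job: reads from disk
evens = sum(1 for x in ds.data() if x % 2 == 0)   # second job: served from memory
print(total, evens, disk_reads)  # 500500 500 1
```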

d. Reusability

We can reuse Spark code for batch processing, join streams against historical data, or run ad-hoc queries on stream state.
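The idea of reuse can be sketched in plain Python (not Spark code). A single hypothetical function, `error_counts`, is applied unchanged to a historical batch and to a stream that arrives as micro-batches, and the stream state is then combined with the historical result. In Spark, this kind of reuse is possible because batch and streaming jobs are written with largely the same operators.

```python
# One piece of business logic reused unchanged for a batch job and for a
# stream processed in small batches. Hypothetical example, not Spark API.
def error_counts(records):
    """Count log records per level, keeping only WARN and ERROR."""
    counts = {}
    for level, _msg in records:
        if level in ("WARN", "ERROR"):
            counts[level] = counts.get(level, 0) + 1
    return counts

def merge(a, b):
    """Add the counts of two dictionaries key by key."""
    out = dict(a)
    for k, v in b.items():
        out[k] = out.get(k, 0) + v
    return out

# Batch: historical data processed in one go.
history = [("INFO", "start"), ("ERROR", "disk"), ("WARN", "slow")]
batch_result = error_counts(history)

# Stream: the same function applied to each arriving micro-batch.
stream = [[("ERROR", "timeout")], [("INFO", "ok"), ("WARN", "retry")]]
state = {}
for micro_batch in stream:
    state = merge(state, error_counts(micro_batch))

# Combine the stream state with the historical (batch) result.
combined = merge(batch_result, state)
print(batch_result, state, combined)
```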

e. Fault Tolerance in Spark

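Apache Spark provides fault tolerance through its core abstraction, the RDD (Resilient Distributed Dataset). Spark RDDs are designed to handle the failure of any worker node in the cluster: each RDD remembers its lineage, the sequence of transformations used to build it, so a lost partition can be recomputed from the original data. Thus, data loss is reduced to zero. Learn different ways to create RDDs in Apache Spark.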
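Lineage-based recovery can be illustrated with a toy model in plain Python. It is not Spark's internal code, and the dictionaries below are hypothetical stand-ins for source blocks and executor memory. Each partition is derived from source data by a recorded list of steps, so when a worker "fails" only its partition is rebuilt by replaying those steps.

```python
# Toy model of lineage-based recovery: each partition can be rebuilt from
# source data by replaying the recorded transformations. An illustration,
# not Spark internals.
source_partitions = {0: [1, 2, 3], 1: [4, 5, 6]}   # e.g. blocks in reliable storage
lineage = [lambda x: x * 10, lambda x: x + 1]      # map steps applied in order

def compute_partition(pid):
    """Recompute one partition from source using the recorded lineage."""
    data = source_partitions[pid]
    for step in lineage:
        data = [step(x) for x in data]
    return data

# Partitions held in memory on two workers.
in_memory = {pid: compute_partition(pid) for pid in source_partitions}

# The worker holding partition 1 fails: its in-memory copy is gone.
del in_memory[1]

# Recovery: only the lost partition is recomputed, not the whole dataset.
in_memory[1] = compute_partition(1)
print(in_memory)  # {0: [11, 21, 31], 1: [41, 51, 61]}
```

Because recovery replays transformations instead of restoring copies, no data needs to be replicated in memory.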

f. Real-Time Stream Processing

Spark has a provision for real-time stream processing. The problem with Hadoop MapReduce was that it could handle and process data that is already present, but not real-time data. With Spark Streaming, we can solve this problem.
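Spark Streaming processes live data by dividing it into small batches at a fixed batch interval and running each batch as a short job. The toy sketch below mimics that idea in plain Python, not with the Spark Streaming API. `micro_batches` is a hypothetical helper that groups a finite list of timestamped events by interval, and a running count is updated after each batch.

```python
# Toy model of micro-batching, the idea behind Spark Streaming's DStreams:
# events are grouped into fixed time intervals and each interval is
# processed as a small batch. Not the Spark Streaming API.
from collections import Counter

def micro_batches(events, interval):
    """Group (timestamp_seconds, value) events into consecutive batches."""
    batches = {}
    for ts, value in events:
        batches.setdefault(int(ts // interval), []).append(value)
    return [batches[k] for k in sorted(batches)]

events = [(0.2, "click"), (0.7, "view"), (1.1, "click"), (2.5, "click"), (2.9, "view")]
running = Counter()
for batch in micro_batches(events, interval=1.0):
    running.update(batch)   # update state after each batch
    print(dict(running))
```

A real stream never ends, so Spark starts a new job whenever an interval closes instead of grouping a finished list as this sketch does.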

g. Lazy Evaluation in Apache Spark

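All transformations on a Spark RDD are lazy in nature: they do not produce a result right away. Instead, a new RDD is formed from the existing one, and the computation runs only when an action (such as count or collect) asks for a result. This increases the efficiency of the system, because Spark can plan the whole chain of transformations at once instead of computing every intermediate result separately. Follow this guide to learn more about Spark lazy evaluation in great detail.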
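Lazy evaluation can be sketched with a small class in plain Python. `LazyDataset` is a hypothetical toy loosely modeled on RDD semantics, not Spark's implementation. Its `map` and `filter` only record a step and return a new dataset, and nothing is computed until the `collect` action runs.

```python
# Toy lazy dataset: transformations only record a step; nothing runs
# until an action (collect) is called. Loosely modeled on RDD semantics,
# not Spark's actual implementation.
class LazyDataset:
    def __init__(self, source, steps=()):
        self._source = source
        self._steps = tuple(steps)

    def map(self, f):
        # Transformation: return a NEW dataset with one more recorded step.
        return LazyDataset(self._source, self._steps + (("map", f),))

    def filter(self, pred):
        # Transformation: also just recorded, not executed.
        return LazyDataset(self._source, self._steps + (("filter", pred),))

    def collect(self):
        # Action: only now is the pipeline executed, element by element.
        out = []
        for x in self._source:
            keep = True
            for kind, f in self._steps:
                if kind == "map":
                    x = f(x)
                elif not f(x):
                    keep = False
                    break
            if keep:
                out.append(x)
        return out

calls = []
def square(x):
    calls.append(x)  # record every time the function really runs
    return x * x

base = LazyDataset(range(5))
squares = base.map(square).filter(lambda v: v % 2 == 0)
print(len(calls))          # 0: nothing has run yet
print(squares.collect())   # [0, 4, 16]
print(len(calls))          # 5
```

Note that `base` is never modified: each transformation returns a new dataset, just as a new RDD is formed from the existing one.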

h. Support for Multiple Languages

Spark supports multiple languages, such as Java, R, Scala and Python. This provides flexibility and overcomes the limitation of Hadoop that applications can be built only in Java.

i. Active, Progressive and Expanding Spark Community

Developers from over 50 companies were involved in making Apache Spark. The project was started in 2009 and is still expanding; about 250 developers have contributed to it so far. It is one of the most important projects of the Apache community.

j. Support for Sophisticated Analysis

In addition to map and reduce, Spark comes with dedicated libraries for streaming data (Spark Streaming), interactive and declarative queries (Spark SQL) and machine learning (MLlib).

k. Integrated with Hadoop

Spark can run independently (in its standalone mode) and also on the Hadoop YARN cluster manager, so it can read existing Hadoop data. This makes Spark flexible.

l. Spark GraphX

Spark has GraphX, a component for graph and graph-parallel computation. It simplifies graph analytics tasks with its collection of graph algorithms and builders.
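Graph-parallel computation is usually expressed vertex by vertex: in each round, every vertex looks at its neighbours and updates its own value. The plain-Python sketch below uses this style to compute connected components. It is not GraphX code. GraphX provides a connectedComponents algorithm that labels each vertex with the lowest vertex ID in its component, and this toy reproduces that result on one machine.

```python
# Toy vertex-centric (Pregel-style) connected components: each vertex starts
# with its own id as a label and repeatedly adopts the smallest label among
# itself and its neighbours until nothing changes. Illustration only.
def connected_components(vertices, edges):
    neighbours = {v: set() for v in vertices}
    for a, b in edges:
        neighbours[a].add(b)
        neighbours[b].add(a)
    label = {v: v for v in vertices}
    changed = True
    while changed:  # each pass corresponds to one synchronous "superstep"
        changed = False
        new_label = {}
        for v in vertices:
            best = min([label[v]] + [label[n] for n in neighbours[v]])
            new_label[v] = best
            if best != label[v]:
                changed = True
        label = new_label
    return label

vertices = [1, 2, 3, 4, 5]
edges = [(1, 2), (2, 3), (4, 5)]
print(connected_components(vertices, edges))  # {1: 1, 2: 1, 3: 1, 4: 4, 5: 4}
```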

m. Cost Efficient

Apache Spark is a cost-effective solution for big data problems, since Hadoop requires a large amount of storage and a large data center for replication.

4. Conclusion

In conclusion, Apache Spark is the most advanced and popular product of the Apache community. It can work with streaming data, includes a machine learning library with various algorithms, processes both structured and unstructured data, handles graph processing, and more.

After learning the features of Apache Spark, follow this guide to compare Apache Spark with Hadoop MapReduce.
