Map Only Job in Hadoop MapReduce with example

1. Objective

In Hadoop, Map-Only job is the process in which mapper does all task, no task is done by the reducer and mapper’s output is the final output. In this tutorial on Map only job in Hadoop MapReduce, we will learn about MapReduce process, the need of map only job in Hadoop, how to set a number of reducers to 0 for Hadoop map only job.

We will also learn what are the advantages of Map Only job in Hadoop MapReduce, processing in Hadoop without reducer along with MapReduce example with no reducer.

Learn how to install Hadoop 2 with Yarn on pseudo distributed mode.

2. What is Map Only Job in Hadoop MapReduce?

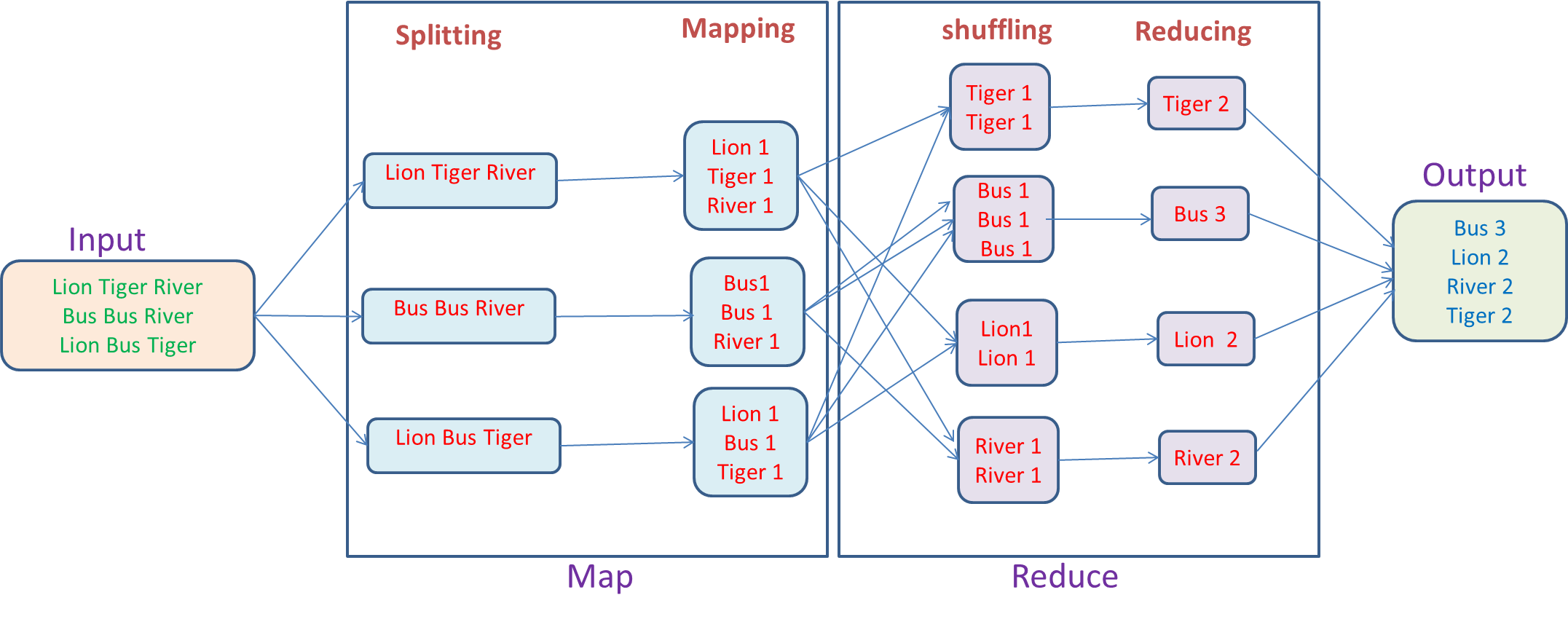

MapReduce is a software framework for easily writing applications that process the vast amount of structured and unstructured data stored in the Hadoop Distributed Filesystem (HDFS). Two important tasks done by MapReduce algorithm are: Map task and Reduce task. Hadoop Map phase takes a set of data and converts it into another set of data, where individual element are broken down into tuples (key/value pairs). Hadoop Reduce phase takes the output from the map as input and combines those data tuples based on the key and accordingly modifies the value of the key.

From the above word-count example, we can say that there are two sets of parallel process, map and reduce; in map process, the first input is split to distribute the work among all the map nodes as shown in a figure, and then each word is identified and mapped to the number 1. Thus the pairs called tuples (key-value) pairs.

In the first mapper node three words lion, tiger, and river are passed. Thus the output of the node will be three key-value pairs with three different keys and value set to 1 and the same process repeated for all nodes. These tuples are then passed to the reducer nodes and partitioner comes into action. It carries out shuffling so that all tuples with the same key are sent to the same node. Thus, in reduce process basically what happens is an aggregation of values or rather an operation on values that share the same key.

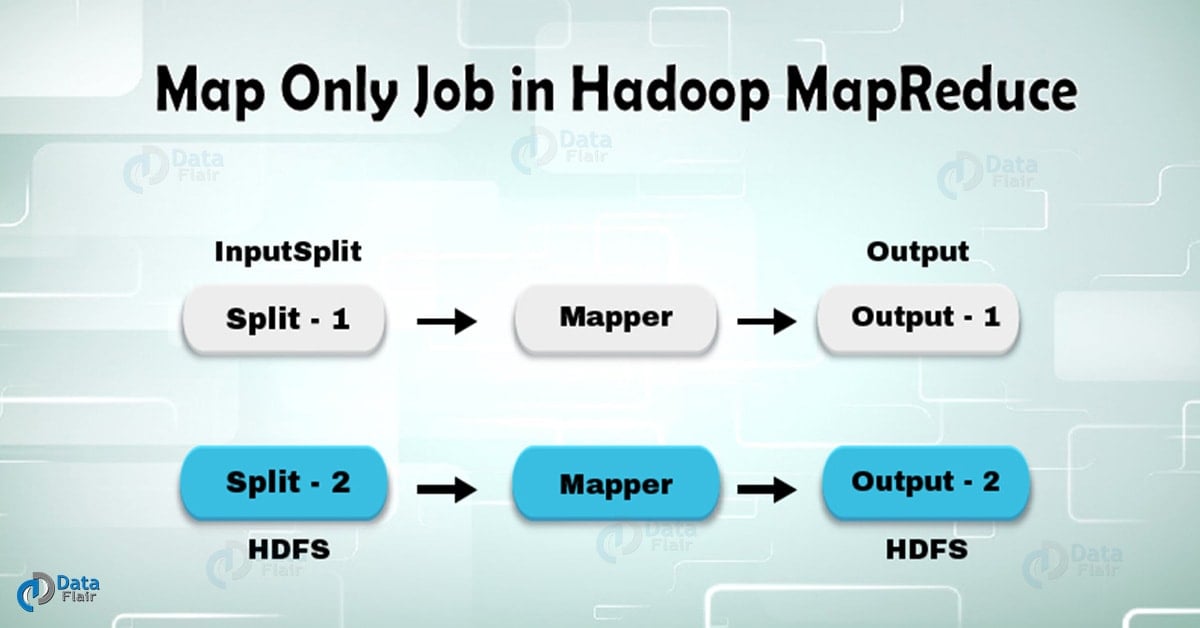

Now, let us consider a scenario where we just need to perform the operation and no aggregation required, in such case, we will prefer ‘Map-Only job’ in Hadoop. In Hadoop Map-Only job, the map does all task with its InputSplit and no job is done by the reducer. Here map output is the final output.

Refer this guide to learn Hadoop features and design principles.

3. How to avoid Reduce Phase in Hadoop?

We can achieve this by setting job.setNumreduceTasks(0) in the configuration in a driver. This will make a number of reducer as 0 and thus the only mapper will be doing the complete task.

4. Advantages of Map only job in Hadoop

In between map and reduces phases there is key, sort and shuffle phase. Sort and shuffle are responsible for sorting the keys in ascending order and then grouping values based on same keys. This phase is very expensive and if reduce phase is not required we should avoid it, as avoiding reduce phase would eliminate sort and shuffle phase as well. This also saves network congestion as in shuffling, an output of mapper travels to reducer and when data size is huge, large data needs to travel to the reducer. Learn more about shuffling and sorting in Hadoop MapReduce.

The output of mapper is written to local disk before sending to reducer but in map only job, this output is directly written to HDFS. This further saves time and reduces cost as well.

Also, there is no need of partitioner and combiner in Hadoop Map Only job that makes the process fast.

5. Conclusion

In conclusion, Map only job in Hadoop reduces the network congestion by avoiding shuffle, sort and reduce phase. Mapper takes care of overall processing and produces the output. We can achieve this by using the job.setNumreduceTasks(0).

Hope you liked this blog. If you have any query related to this blog, so feel free to share with us. We will be happy to solve them.

See Also-

Did you know we work 24x7 to provide you best tutorials

Please encourage us - write a review on Google