PySpark SparkConf – Attributes and Applications

In our last PySpark tutorial, we covered PySpark serializers. Today, we will discuss PySpark SparkConf: its attributes and how to use it when running Spark applications.

We will also walk through a PySpark SparkConf example. To run a Spark application locally or on a cluster, we need to set a few configurations and parameters, and SparkConf is what we use for that. This tutorial explains how to use SparkConf with PySpark.

So, let's start with PySpark SparkConf.

What is PySpark SparkConf?

To run a Spark application locally or on a cluster, we need to set a few configurations and parameters, and this is what SparkConf helps with. In short, it holds the configuration for a Spark application.

- Code

For PySpark, here is the signature of the SparkConf class:

class pyspark.SparkConf(loadDefaults=True, _jvm=None, _jconf=None)

First, we create a SparkConf object with SparkConf(). By default, this also loads values from any spark.* Java system properties. Any parameters we then set directly on the SparkConf object take priority over those system properties.

The setter methods of the SparkConf class support chaining. For example, we can write conf.setAppName("PySpark App").setMaster("local"). However, once we pass a SparkConf object to Apache Spark, it is cloned and can no longer be modified by the user.

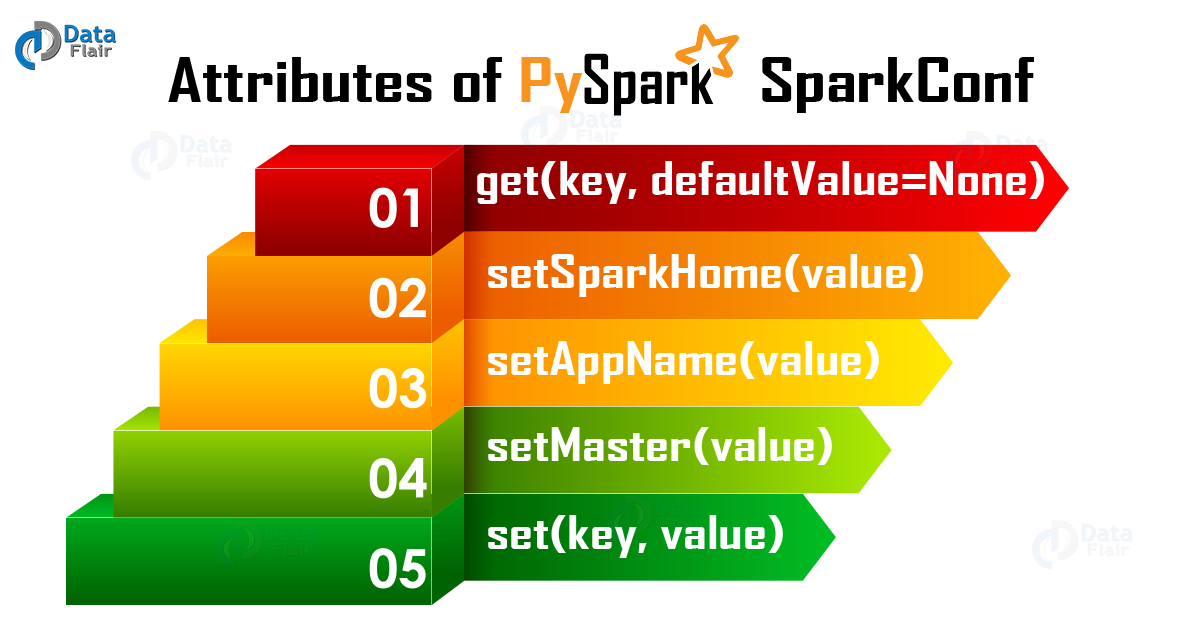

Attributes of PySpark SparkConf

Here are the most commonly used attributes of SparkConf:

i. set(key, value)

It helps to set a configuration property.

ii. setMaster(value)

We use it to set the master URL.

iii. setAppName(value)

We use it to set an application name.

iv. get(key, defaultValue=None)

It returns the configured value for a key, or defaultValue if the key is not set.

v. setSparkHome(value)

We use it to set the Spark installation path on worker nodes.

The following code creates SparkConf and SparkContext objects as part of an application. We can validate it by running it with the Python interpreter or spark-submit from the base directory of our application:

from pyspark import SparkConf, SparkContext

conf = SparkConf().setAppName("Spark Demo").setMaster("local")

sc = SparkContext(conf=conf)

Running Spark Applications Using SparkConf

In addition, here are the different contexts in which we can run Spark applications:

- local – conf

SparkConf().setAppName("Spark Demo").setMaster("local")

- yarn-client – conf (deprecated since Spark 2.0 in favor of the master "yarn" with client deploy mode)

SparkConf().setAppName("Spark Demo").setMaster("yarn-client")

- mesos URL

- spark URL – conf

SparkConf().setAppName("Spark Demo").setMaster("spark master URL")

- Code snippet to get all the properties

for i in sc.getConf().getAll():
    print(i)

So, this was all about PySpark SparkConf. We hope you liked our explanation.

Conclusion

In this tutorial, we learned about PySpark SparkConf, including the code needed to create one. We also discussed the most commonly used attributes of SparkConf and the different contexts for running Spark applications. If you still have any doubts, comment below.
