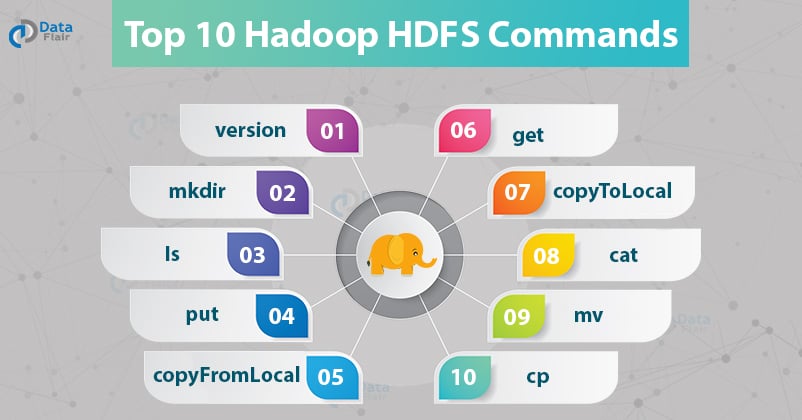

Top 10 Hadoop HDFS Commands with Examples and Usage

Explore the most essential and frequently used Hadoop HDFS commands for performing file operations on HDFS.

Hadoop HDFS is a distributed file system that stores very large files redundantly by replicating their blocks across the nodes of a cluster. It is designed for data sets ranging from terabytes to petabytes.

Hadoop HDFS Commands

With the HDFS commands, we can perform file operations such as changing file permissions, viewing file contents, creating files or directories, and copying files or directories from the local file system to HDFS or vice versa.

Before starting with the HDFS command, we have to start the Hadoop services. To start the Hadoop services do the following:

1. Move to the ~/hadoop-3.1.2 directory

2. Start Hadoop service by using the command

sbin/start-dfs.sh
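As a rough sketch, the two steps above can be combined into a short script. The HADOOP_HOME default assumes the ~/hadoop-3.1.2 location used in this tutorial; jps is the JDK tool that lists running Java processes and is used here only to confirm that the daemons came up.

```shell
# Assumption: Hadoop is unpacked in ~/hadoop-3.1.2, as in the steps above.
HADOOP_HOME="${HADOOP_HOME:-$HOME/hadoop-3.1.2}"

if [ -x "$HADOOP_HOME/sbin/start-dfs.sh" ]; then
  # Starts the HDFS daemons (NameNode, DataNodes and, by default, a SecondaryNameNode).
  "$HADOOP_HOME/sbin/start-dfs.sh"
  # List running Java processes to confirm the daemons are up.
  jps
else
  echo "start-dfs.sh not found under $HADOOP_HOME; adjust HADOOP_HOME"
fi
```

If the script reports that start-dfs.sh is missing, point HADOOP_HOME at your actual installation directory and run it again.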

In this Hadoop Commands tutorial, we have mentioned the top 10 Hadoop HDFS commands with their usage, examples, and description.

Let us now start with the HDFS commands.

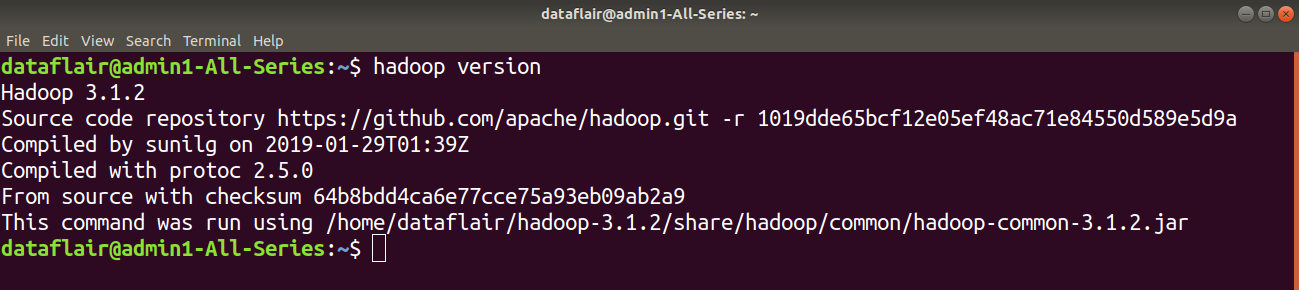

1. version

Hadoop HDFS version Command Usage:

hadoop version

Hadoop HDFS version Command Example:

Before working with HDFS, you need to deploy Hadoop, that is, install and configure Hadoop 3.

hadoop version

Hadoop HDFS version Command Description:

The hadoop version command prints the version of the installed Hadoop distribution.

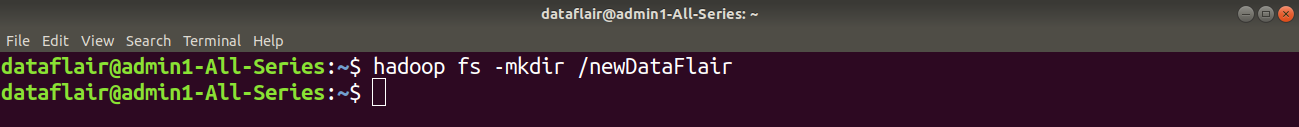

2. mkdir

Hadoop HDFS mkdir Command Usage:

hadoop fs -mkdir /path/directory_name

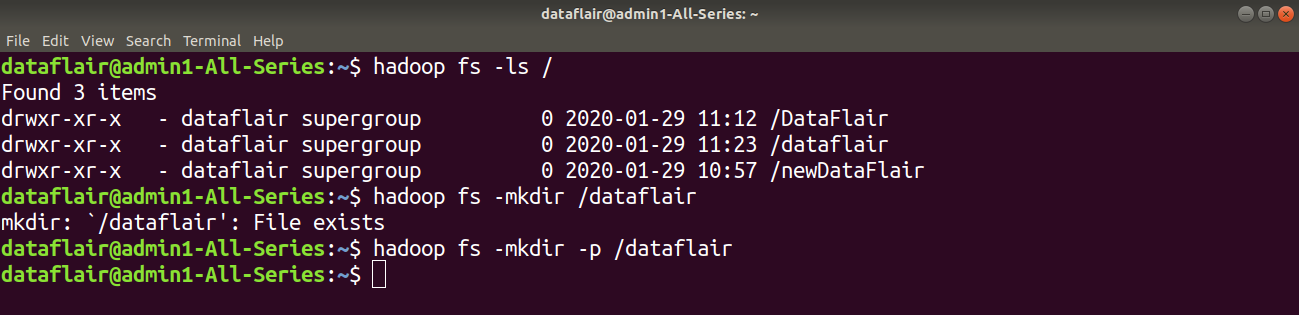

Hadoop HDFS mkdir Command Example 1:

In this example, we create a directory named newDataFlair in HDFS using the mkdir command.

Using the ls command, we can check for the directories in HDFS.

Example 2:

Hadoop HDFS mkdir Command Description:

This command creates the directory in HDFS if it does not already exist.

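Note: If the directory already exists in HDFS, the command fails with an error message saying that the file already exists.

Use hadoop fs -mkdir -p /path/directoryname so that the command does not fail even if the directory exists. The -p option also creates any missing parent directories along the path.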
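The following is a minimal sketch of the mkdir workflow, assuming a running HDFS and reusing the newDataFlair directory name from the example. The guard around the calls is an addition for illustration; it simply skips them when the hadoop CLI is not installed.

```shell
dir="/newDataFlair"   # directory name taken from the example above

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -mkdir "$dir"       # reports "File exists" if $dir is already there
  hadoop fs -mkdir -p "$dir"    # -p: no error if it exists; creates missing parents
  hadoop fs -ls /               # confirm that the directory is listed
else
  echo "hadoop CLI not found; skipping"
fi
```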

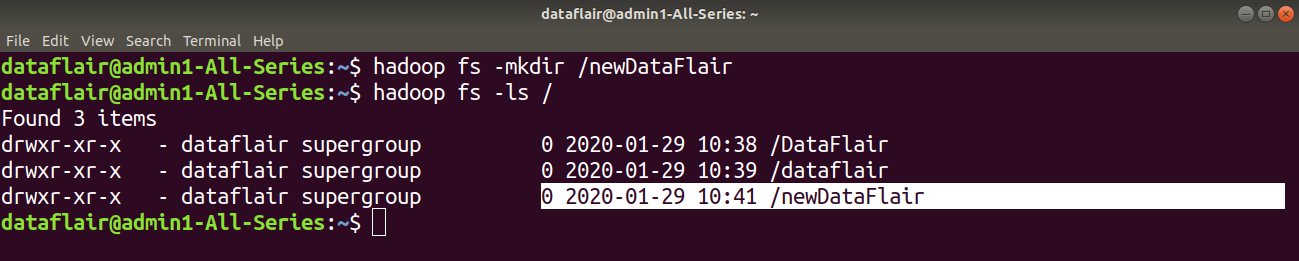

3. ls

Hadoop HDFS ls Command Usage:

hadoop fs -ls /path

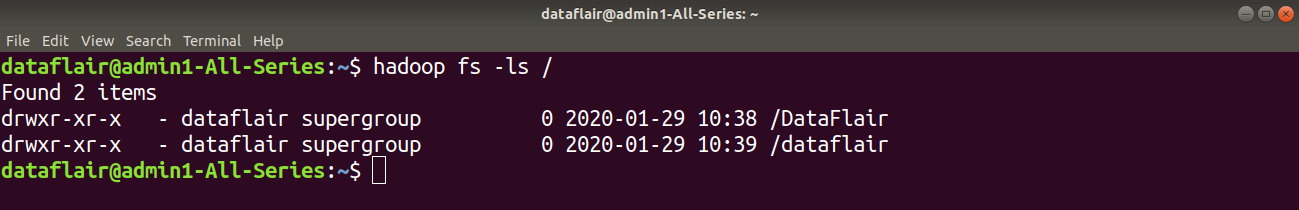

Hadoop HDFS ls Command Example 1:

In the example below, we use the ls command to list the files and directories present in HDFS.

Hadoop HDFS ls Command Description:

The Hadoop fs shell command ls lists the contents of the directory given in the path. For each file or directory it shows the name, permissions, owner, size, and modification date.

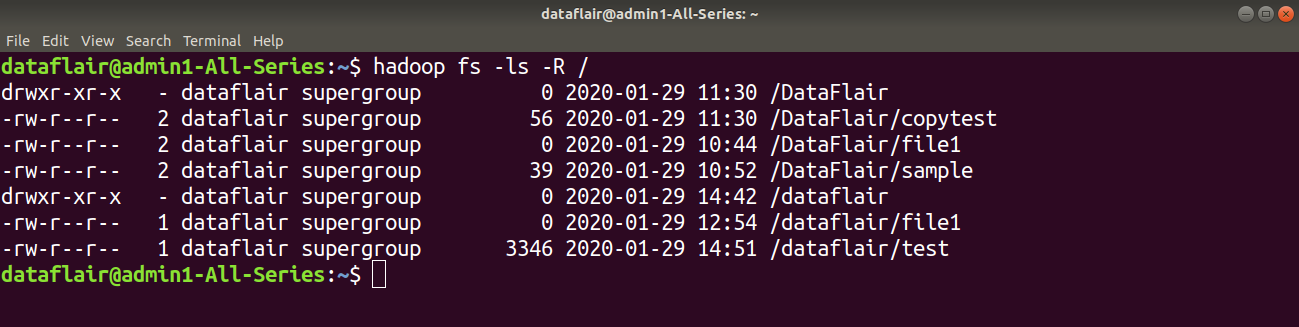

Hadoop HDFS ls Command Example 2:

hadoop fs -ls -R /path

Hadoop HDFS ls -R Command Description:

With the -R option, the command behaves like -ls but recursively displays the entries in all subdirectories of the path.
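As an illustration, the sketch below runs the plain and the recursive listing side by side. The root path is only an example, and the guard skips the calls when the hadoop CLI is not available.

```shell
path="/"   # example path; replace with the HDFS directory you want to inspect

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -ls "$path"       # direct children of $path only
  hadoop fs -ls -R "$path"    # recursive: descends into every subdirectory
  hadoop fs -ls -h "$path"    # -h: print file sizes in human-readable units
else
  echo "hadoop CLI not found; skipping"
fi
```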

4. put

Hadoop HDFS put Command Usage:

hadoop fs -put <localsrc> <dest>

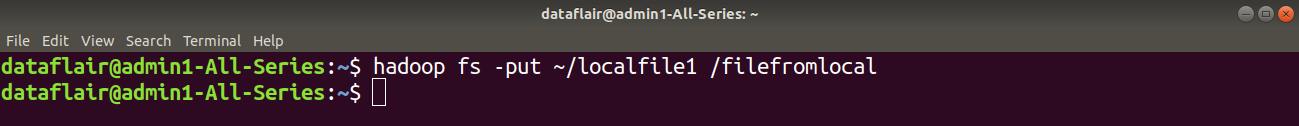

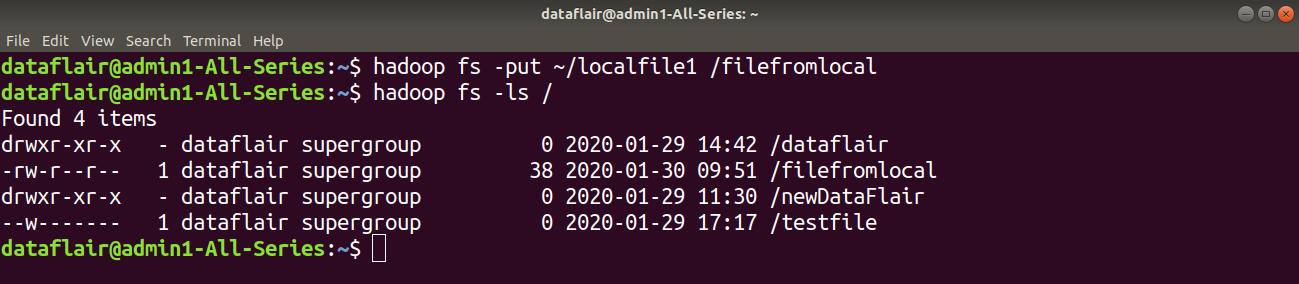

Hadoop HDFS put Command Example:

Here in this example, we are trying to copy localfile1 of the local file system to the Hadoop filesystem.

Hadoop HDFS put Command Description:

The Hadoop fs shell command put copies files or directories from the local filesystem to the destination in the Hadoop filesystem. It is similar to copyFromLocal.
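A small sketch of put, under the assumption that the /newDataFlair directory from the mkdir example exists in HDFS. The temporary working directory and the stdin.txt file name are hypothetical and only serve the illustration. The second call shows that put can read its input from stdin when - is given as the source.

```shell
workdir="$(mktemp -d)"                      # scratch directory on the local file system
echo "hello hdfs" > "$workdir/localfile1"   # sample local file to upload

if command -v hadoop >/dev/null 2>&1; then
  # Copy the local file into the HDFS directory (assumed to exist).
  hadoop fs -put "$workdir/localfile1" /newDataFlair/
  # Read from stdin instead of a file; stdin.txt is a hypothetical target name.
  echo "from stdin" | hadoop fs -put - /newDataFlair/stdin.txt
else
  echo "hadoop CLI not found; skipping"
fi
```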

5. copyFromLocal

Hadoop HDFS copyFromLocal Command Usage:

hadoop fs -copyFromLocal <localsrc> <hdfs destination>

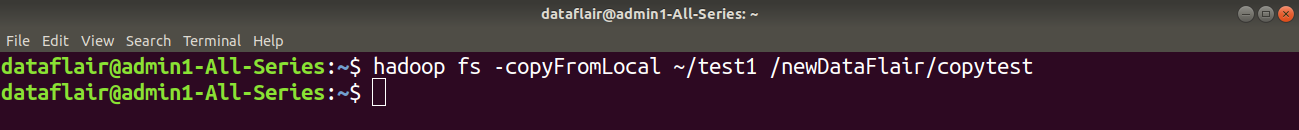

Hadoop HDFS copyFromLocal Command Example:

Here in the below example, we are trying to copy the ‘test1’ file present in the local file system to the newDataFlair directory of Hadoop.

Hadoop HDFS copyFromLocal Command Description:

This command copies a file from the local file system to HDFS. It is similar to put, except that the source is restricted to a local file reference.
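The sketch below uploads the test1 file twice to show the -f option, which overwrites a destination that already exists. It assumes that /newDataFlair exists in HDFS; the scratch directory and file contents are made up for the illustration.

```shell
workdir="$(mktemp -d)"           # scratch directory on the local file system
echo "v1" > "$workdir/test1"     # first version of the local file

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -copyFromLocal "$workdir/test1" /newDataFlair/
fi

echo "v2" > "$workdir/test1"     # change the local file

if command -v hadoop >/dev/null 2>&1; then
  # Without -f the second copy fails because the destination exists.
  hadoop fs -copyFromLocal -f "$workdir/test1" /newDataFlair/
else
  echo "hadoop CLI not found; skipping"
fi
```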

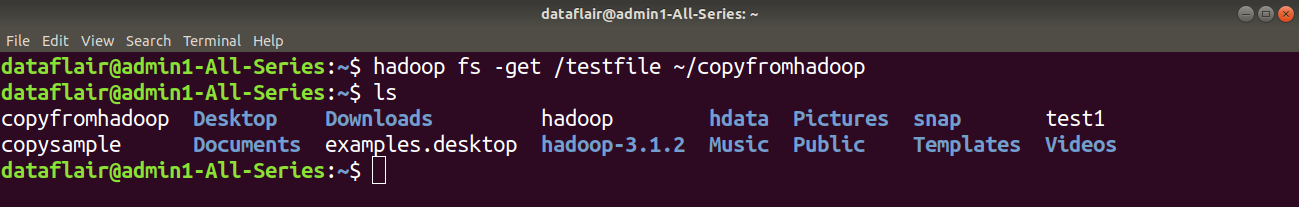

6. get

Hadoop HDFS get Command Usage:

hadoop fs -get <src> <localdest>

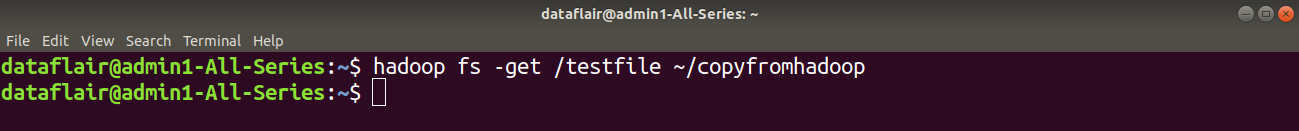

Hadoop HDFS get Command Example:

In this example, we are trying to copy the ‘testfile’ of the hadoop filesystem to the local file system.

Hadoop HDFS get Command Description:

The Hadoop fs shell command get copies the file or directory from the Hadoop file system to the local file system.
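A minimal sketch of get follows. The article does not say where testfile lives in HDFS, so the /testfile path below is an assumption; the local destination is a scratch directory created for the illustration.

```shell
src="/testfile"          # assumed HDFS location of the example file
dest="$(mktemp -d)"      # local directory that receives the copy

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -get "$src" "$dest/"   # HDFS -> local file system
  ls -l "$dest"                    # show what arrived locally
else
  echo "hadoop CLI not found; skipping"
fi
```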

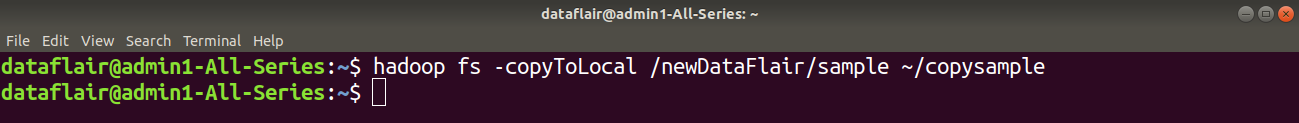

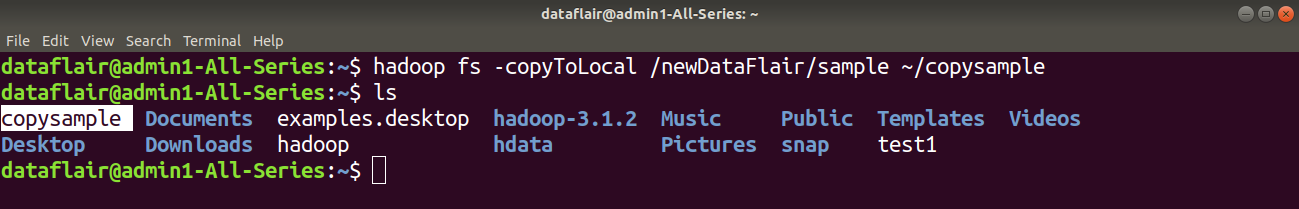

7. copyToLocal

Hadoop HDFS copyToLocal Command Usage:

hadoop fs -copyToLocal <hdfs source> <localdst>

Hadoop HDFS copyToLocal Command Example:

Here in this example, we are trying to copy the ‘sample’ file present in the newDataFlair directory of HDFS to the local file system.

We can cross-check whether the file is copied or not using the ls command.

Hadoop HDFS copyToLocal Description:

The copyToLocal command copies a file from HDFS to the local file system. It is similar to get, except that the destination is restricted to a local file reference.
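The cross-check mentioned above can be sketched as follows, assuming the sample file sits in /newDataFlair as in the example. The local scratch directory is hypothetical.

```shell
dest="$(mktemp -d)"      # local directory that receives the copy

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -copyToLocal /newDataFlair/sample "$dest/"
  ls -l "$dest"          # cross-check with the local ls command
  # Report the outcome explicitly instead of reading the listing by eye.
  if [ -f "$dest/sample" ]; then
    echo "sample copied to $dest"
  else
    echo "copy failed: sample not found in $dest"
  fi
else
  echo "hadoop CLI not found; skipping"
fi
```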

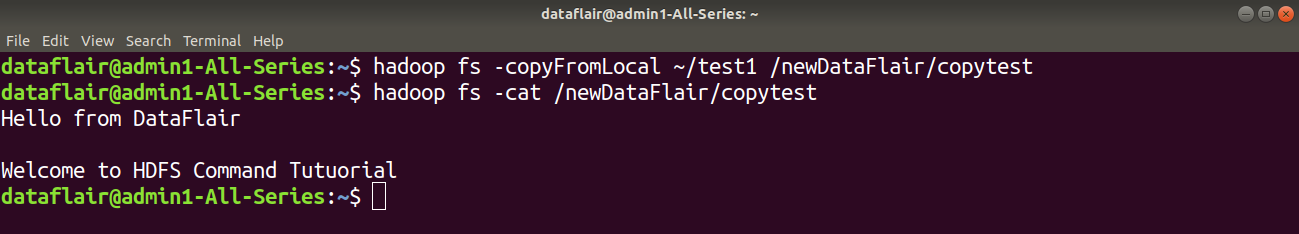

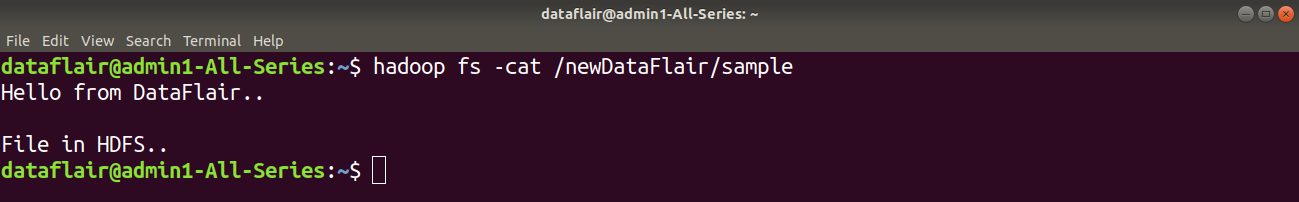

8. cat

Hadoop HDFS cat Command Usage:

hadoop fs -cat /path_to_file_in_hdfs

Hadoop HDFS cat Command Example:

In this example, we use the cat command to display the content of the ‘sample’ file present in the newDataFlair directory of HDFS.

Hadoop HDFS cat Command Description:

The cat command reads the file in HDFS and writes its content to the console (stdout).
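Because cat writes to stdout, its output can be piped into ordinary local tools. The sketch below assumes the sample file from the example and shows two such pipelines; for large files, piping into head avoids printing everything.

```shell
file="/newDataFlair/sample"   # HDFS file from the example above

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -cat "$file"               # print the whole file
  hadoop fs -cat "$file" | head -n 5   # only the first five lines
  hadoop fs -cat "$file" | wc -l       # count the lines locally
else
  echo "hadoop CLI not found; skipping"
fi
```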

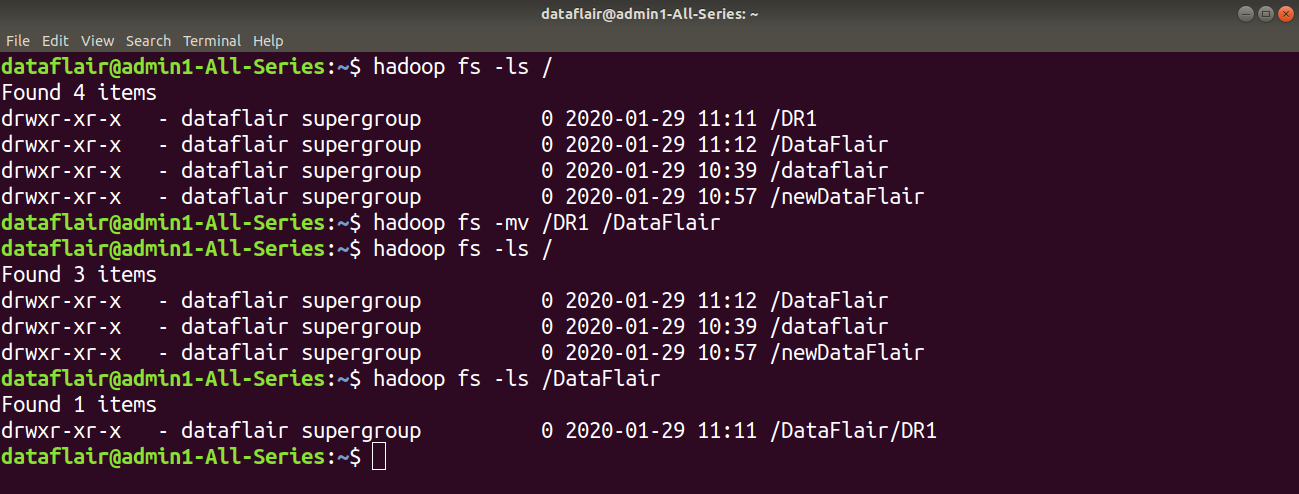

9. mv

Hadoop HDFS mv Command Usage:

hadoop fs -mv <src> <dest>

Hadoop HDFS mv Command Example:

In this example, we have a directory ‘DR1’ in HDFS. We are using mv command to move the DR1 directory to the DataFlair directory in HDFS.

Hadoop HDFS mv Command Description:

The HDFS mv command moves the files or directories from the source to a destination within HDFS.
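A sketch of the move described in the example, assuming that both DR1 and DataFlair sit directly under the HDFS root (the article does not give their full paths). The -test -d call checks that the directory exists at its new location.

```shell
src="/DR1"          # assumed location of the directory to move
dst="/DataFlair"    # assumed location of the target directory

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -mv "$src" "$dst/"
  # -test -d returns 0 if the path exists and is a directory.
  if hadoop fs -test -d "$dst/DR1"; then
    echo "DR1 is now under $dst"
  fi
else
  echo "hadoop CLI not found; skipping"
fi
```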

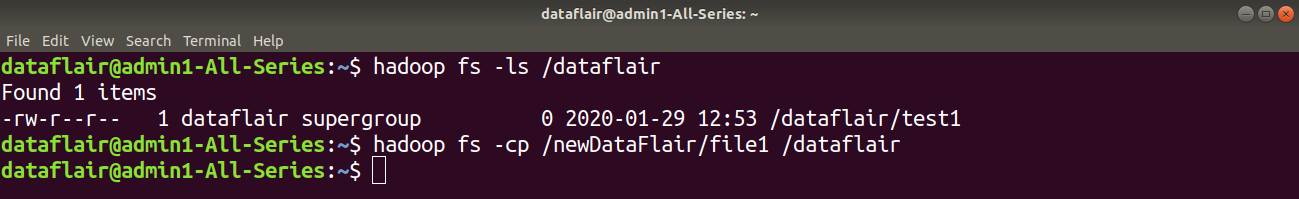

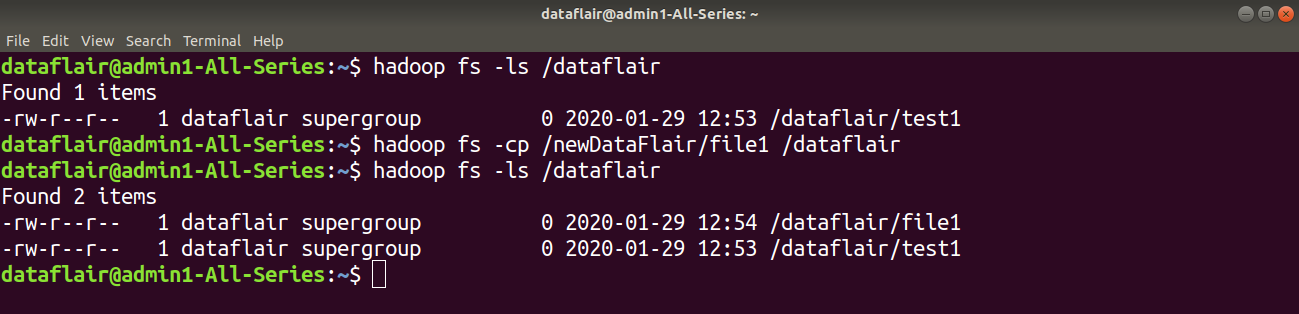

10. cp

Hadoop HDFS cp Command Usage:

hadoop fs -cp <src> <dest>

Hadoop HDFS cp Command Example:

In the example below, we copy ‘file1’ from the newDataFlair directory in HDFS to the dataflair directory in HDFS.

Hadoop HDFS cp Command Description:

The cp command copies a file from one directory to another within HDFS. Unlike mv, it leaves the source file in place.
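The copy in the example can be sketched like this, assuming both directories sit directly under the HDFS root. Listing the source and the destination afterwards shows that file1 is present in both.

```shell
src="/newDataFlair/file1"   # source file from the example
dst="/dataflair"            # assumed location of the target directory

if command -v hadoop >/dev/null 2>&1; then
  hadoop fs -cp "$src" "$dst/"
  hadoop fs -ls /newDataFlair   # the source file is still here
  hadoop fs -ls "$dst"          # and a copy now exists here
else
  echo "hadoop CLI not found; skipping"
fi
```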

So this was all on Hadoop HDFS Commands. Hope you like it.

What is the major difference between copyFromLocal and copyToLocal?

copyFromLocal copies from the local file system to HDFS; similarly, copyToLocal copies from HDFS to the local file system. Hope that answers your question.

The major difference is the direction: copyFromLocal copies a file from your local machine to HDFS, and copyToLocal takes a file from HDFS and places it on your local machine.

What is the difference between the Hadoop HDFS put and copyFromLocal commands?

No difference, both are the same.

If both are the same, why are there two commands?

put accepts one or more local sources and can also read its input from stdin (use - as the source), whereas copyFromLocal restricts the source to local file references.

put is similar to copyFromLocal, with that one small difference. Note that neither command resumes an interrupted copy: if the transfer fails at 95%, you have to copy the entire file again with either command.

Hope this makes sense to you.

We need a bit more description of each command. What is provided as of now is not sufficient for clarification.

How do we install a package in Hadoop?

Hi, very good explanation! I have a doubt: why do we need Java to write or read a file in HDFS when the copyFromLocal and copyToLocal commands are available? Please explain the program if possible.

How can we tell whether a given path belongs to the local file system or the Hadoop file system?